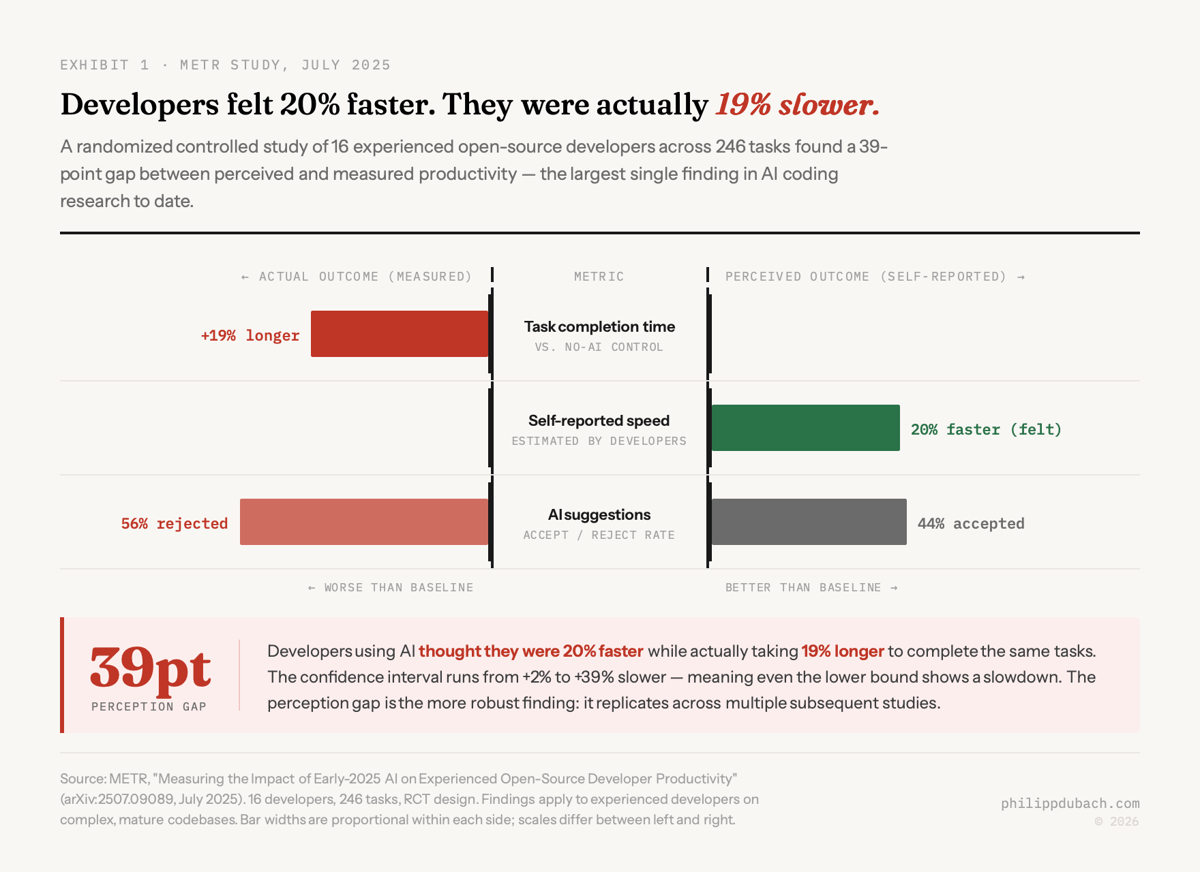

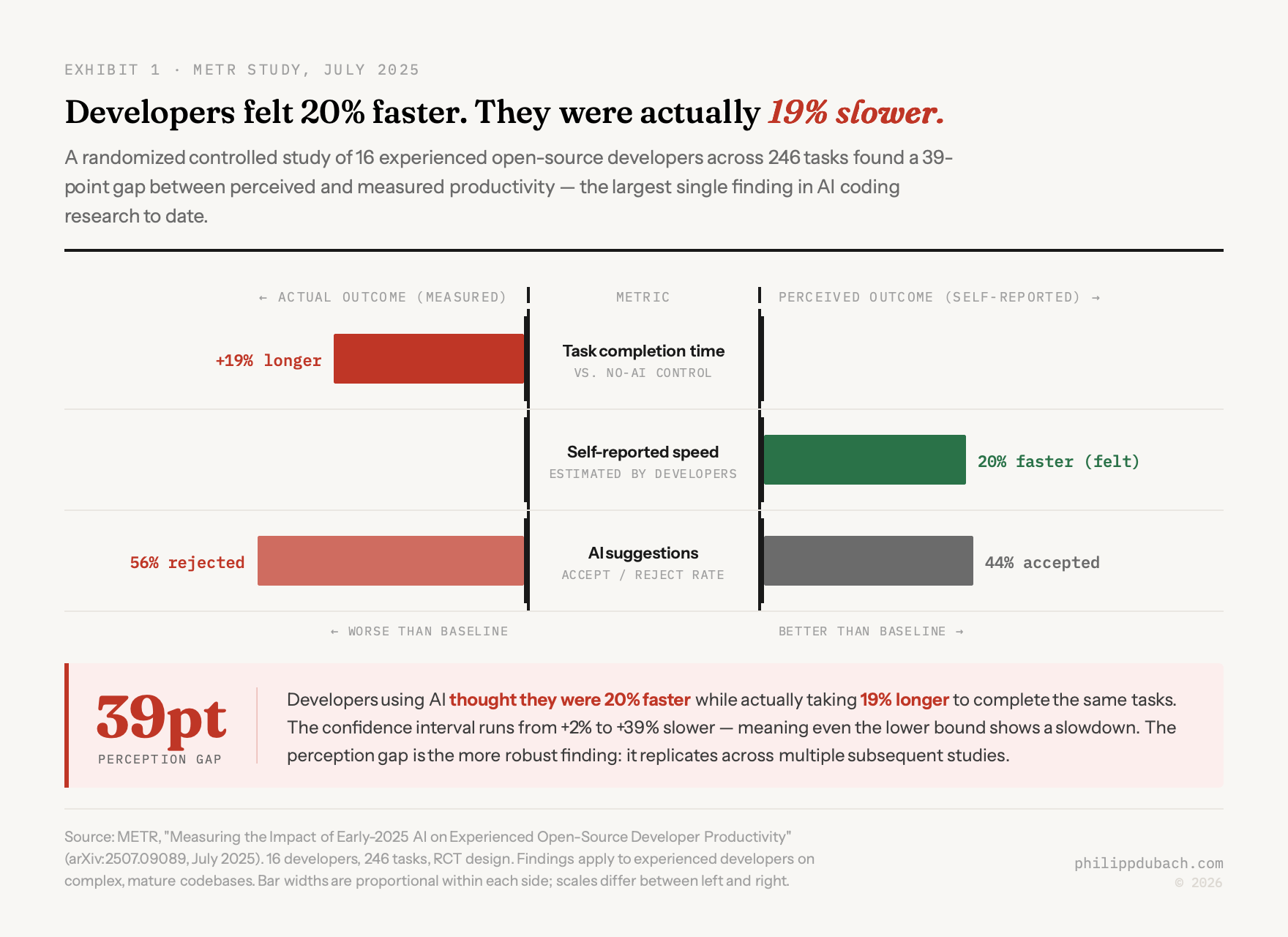

A study METR published in July 2025 gave AI coding tools their most credible test yet. Sixteen experienced open-source developers, 246 real tasks, randomized controlled design. The researchers expected to measure how much faster AI made them. What they found: tasks where the developers were allowed to use AI took 19% longer to complete than tasks where AI was off-limits.

The developers themselves thought they were 20% faster.

That 39-point gap between perception and reality is the most important number in METR’s paper. It lands amid two years of adoption data pointing the other way. DX surveyed 121,000 developers across 450+ companies and found 92.6% use AI coding tools at least monthly. JetBrains’ AI Pulse measured 93%. The DORA 2025 report put it at 90%. The productivity data is far less flattering: six independent research efforts converge on roughly the same ceiling, about 10% at the system level, and that’s being generous.

The bottleneck was never the typing

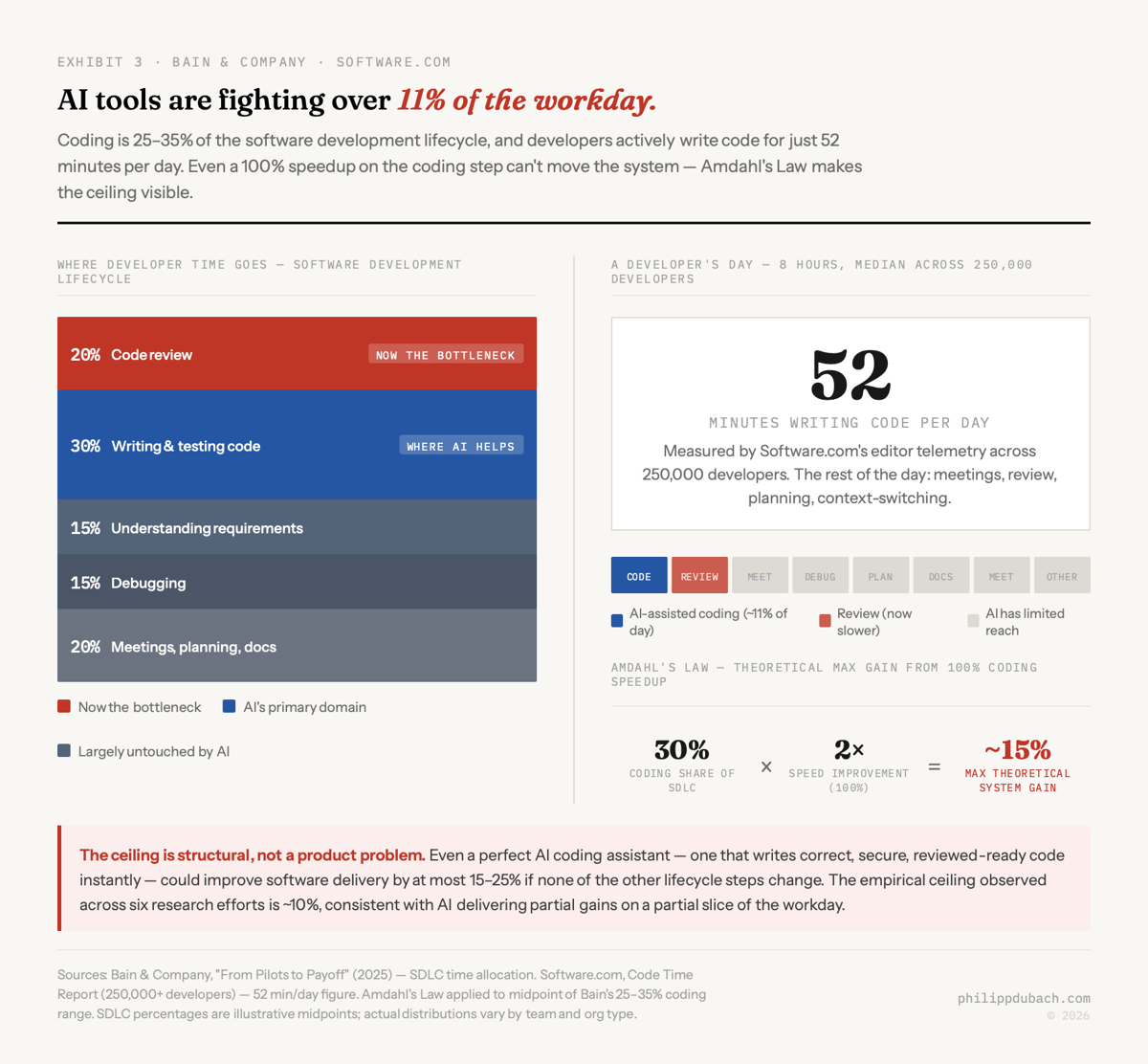

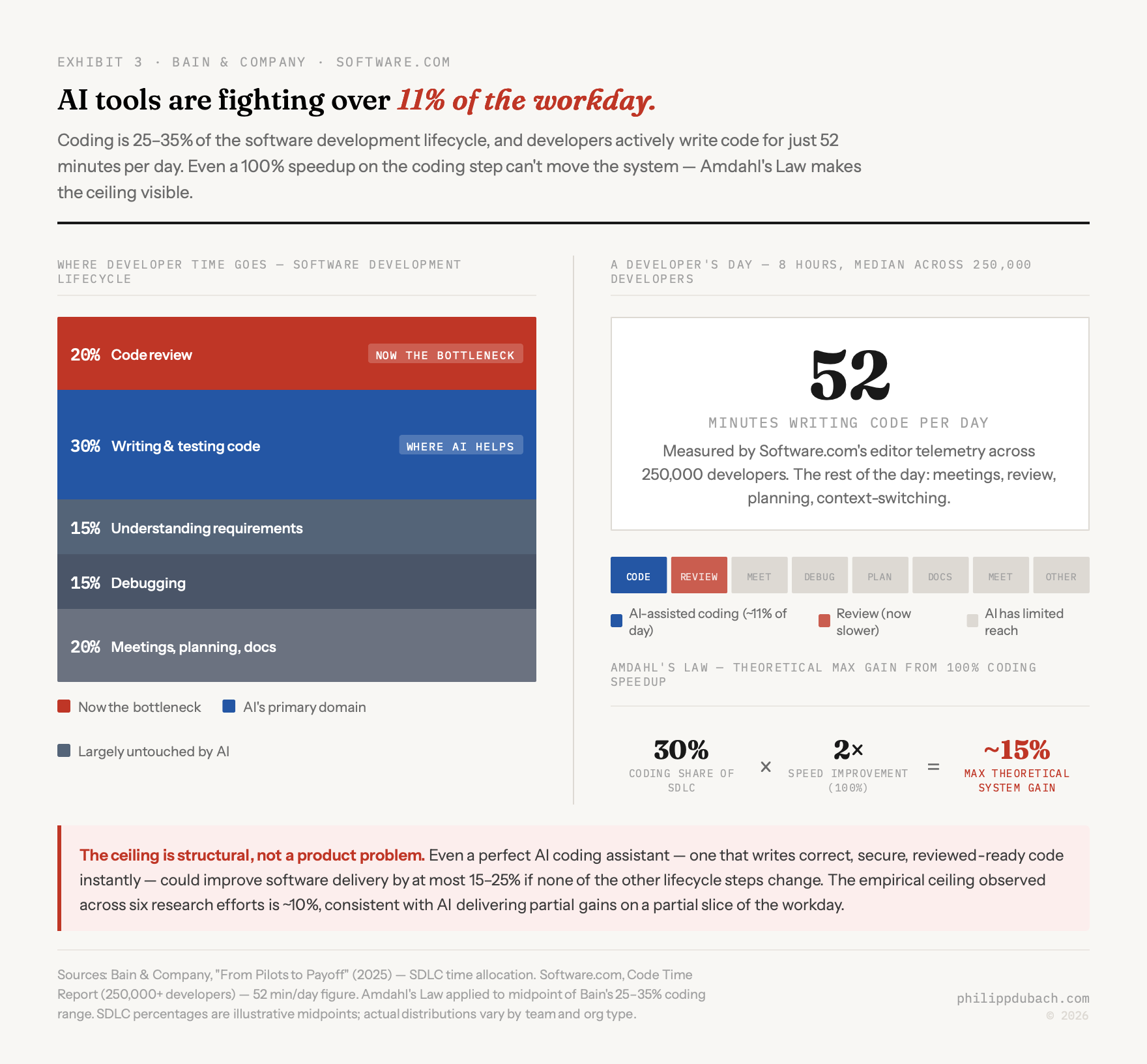

Goldratt’s Theory of Constraints makes the following prediction: optimizing a step that isn’t the bottleneck doesn’t improve system throughput. You can make the fastest machine on the factory floor twice as fast. If it’s feeding a queue that’s already backed up, you’ve accomplished nothing at the output level.
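A toy model makes Goldratt’s prediction concrete. The sketch below treats delivery as a serial pipeline in which each stage has a capacity in work items per week. The stage names and capacities are hypothetical, chosen only for illustration, and the model ignores rework loops. In steady state, throughput is the minimum stage capacity, so doubling a stage that isn’t the bottleneck leaves the output unchanged:

```python
# Toy model of a serial delivery pipeline (hypothetical numbers).
# Each stage has a capacity in work items per week; in steady state,
# a serial pipeline cannot deliver faster than its slowest stage.


def pipeline_throughput(capacities: dict[str, float]) -> float:
    """Steady-state throughput: the minimum capacity across all stages."""
    return min(capacities.values())


def bottleneck(capacities: dict[str, float]) -> str:
    """Name of the stage with the lowest capacity."""
    return min(capacities, key=lambda stage: capacities[stage])


# Illustrative capacities, not measured data.
baseline = {"requirements": 12.0, "coding": 10.0, "review": 6.0, "deploy": 15.0}

# Double the coding stage and leave every other stage unchanged.
faster_coding = {**baseline, "coding": baseline["coding"] * 2}

print(bottleneck(baseline), pipeline_throughput(baseline))            # review 6.0
print(bottleneck(faster_coding), pipeline_throughput(faster_coding))  # review 6.0
```

In this model, only raising the review stage’s capacity moves the output number; doubling coding just lengthens the queue in front of review.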

Writing code has never been that bottleneck. Bain’s analysis found that writing and testing code accounts for roughly 25-35% of the total software development lifecycle. The rest goes to code review, understanding requirements, debugging, meetings, and documentation. Even if the coding step ran twice as fast (a 100% speedup), the overall improvement would be only about 14-21%, and that’s before accounting for what happens downstream when you generate a lot more code. Gergely Orosz, who runs The Pragmatic Engineer, put it directly:

Speed of typing out code has never been the bottleneck for software development.

What the data shows now is that AI tools don’t just fail to clear the bottleneck. They move it downstream and make it worse.
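The arithmetic behind Bain’s point is Amdahl’s law: if a fraction f of the work is sped up by a factor s, the whole process speeds up by 1 / ((1 - f) + f / s). A minimal Python sketch, assuming that a “100% speedup” means the coding step runs twice as fast:

```python
def amdahl_speedup(fraction: float, stage_speedup: float) -> float:
    """Overall speedup when `fraction` of total time runs `stage_speedup` times faster.

    Amdahl's law: 1 / ((1 - fraction) + fraction / stage_speedup).
    """
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    if stage_speedup <= 0:
        raise ValueError("stage_speedup must be positive")
    return 1.0 / ((1.0 - fraction) + fraction / stage_speedup)


# Bain's range: writing and testing code is ~25-35% of the lifecycle.
# Assumption: a "100% speedup" means the coding step runs 2x as fast.
for share in (0.25, 0.35):
    gain = amdahl_speedup(share, 2.0) - 1.0
    print(f"coding share {share:.0%}: overall gain {gain:.1%}")
```

For the 25% and 35% shares this prints overall gains of 14.3% and 21.2%. The untouched 65-75% of the lifecycle dominates the result.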

The code review bottleneck

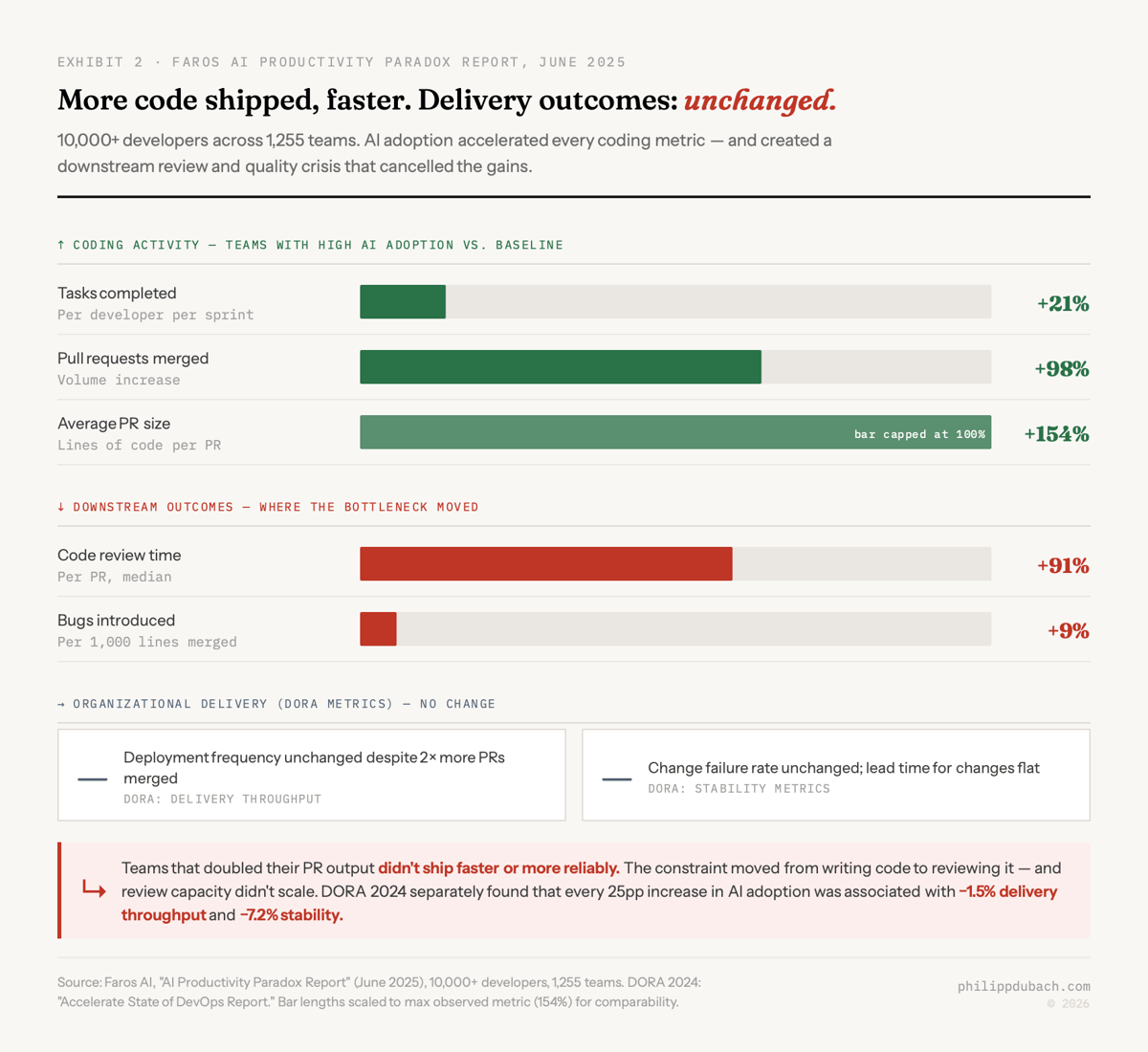

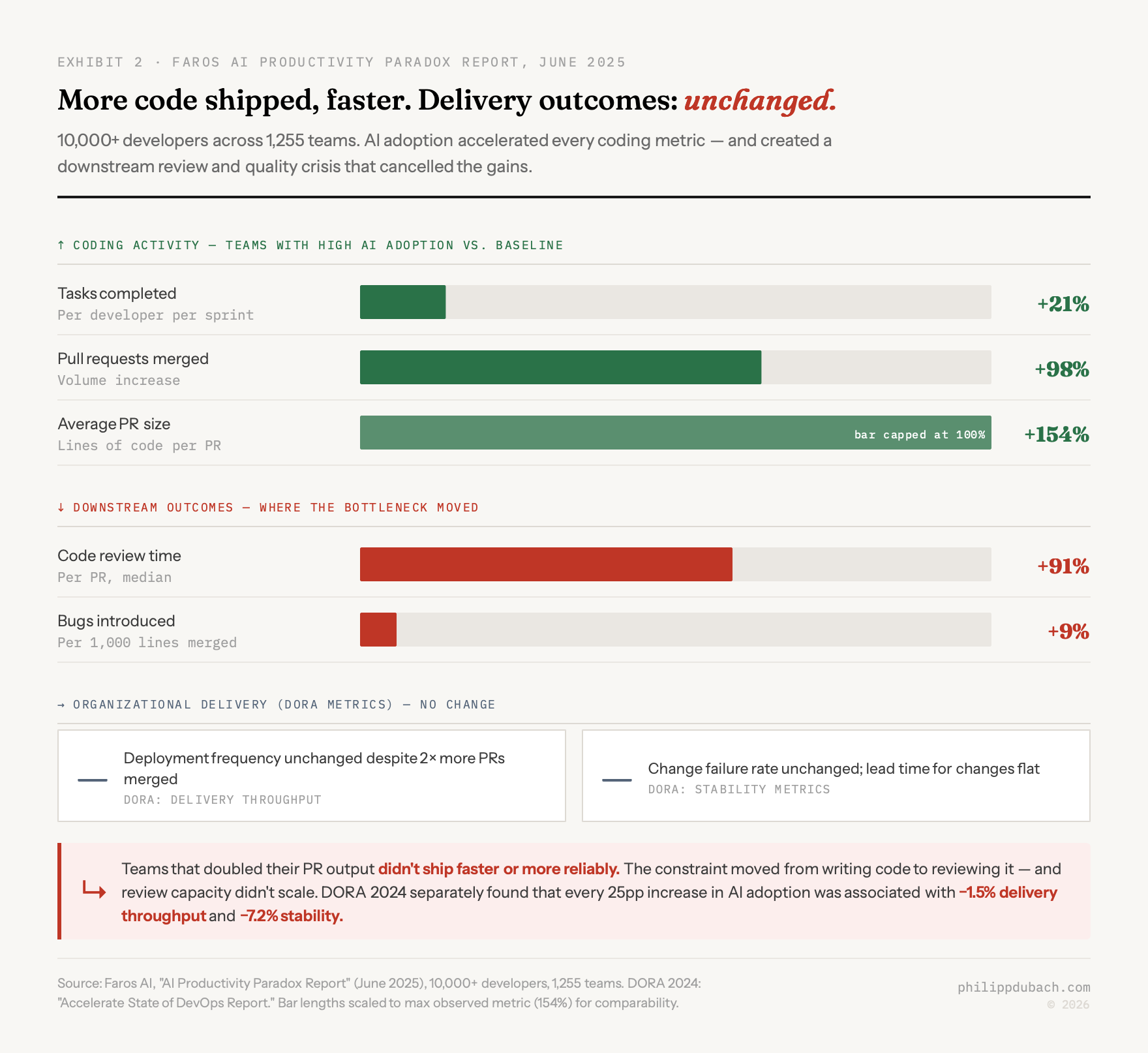

Faros AI measured this across 10,000+ developers on 1,255 teams in June 2025. Teams with high AI adoption completed 21% more tasks and merged 98% more pull requests. PR size grew 154%. Then: review time up 91%, bugs up 9%, organizational DORA metrics flat.

More PRs, bigger PRs, slower reviews, more bugs, no throughput improvement. The coding step accelerated. The review step, already a constraint, got worse. Michael Truell, Cursor’s CEO:

Cursor has made it much faster to write production code. However, for most engineering teams, reviewing code looks the same as it did three years ago.

Cursor then acquired Graphite, a code review startup. The acquisition is a more honest statement about where the constraint lives than anything in Cursor’s marketing. The DORA 2024 report found that for every 25-percentage-point increase in AI adoption, delivery throughput dropped 1.5% and delivery stability dropped 7.2%. DORA 2025, at 90% adoption, put it tersely: “AI doesn’t fix a team; it amplifies what’s already there.” The negative relationship with stability holds even as adoption saturates.

41% of what?

One number circulates constantly in press coverage: 41% of code is now AI-generated. It comes from Emad Mostaque, who took GitHub’s figure about the share of code accepted by Copilot users and extrapolated it into a claim about all code everywhere. The original figure applied only to developers already using Copilot, a fraction of GitHub’s user base at the time. The extrapolation doesn’t hold.

The more defensible numbers: DX’s measurement across 4.2 million developers puts AI-generated production code at 26.9%. A study published in Science found roughly 30% of Python functions from U.S. contributors on GitHub were AI-generated by late 2024. Sundar Pichai said more than a quarter of all new code at Google is AI-generated. These numbers cluster around 25-30%.

The inflated figure matters because it supports a specific argument: that AI has already crossed some threshold, that the transformation is done, that the productivity gains are already baked in. At 27%, AI is a meaningful contributor to software production. At 41%, you’re telling a different story, and the decisions that follow from it are different decisions.

The quality picture at 27% is not reassuring. Veracode tested 100+ LLMs across 80 coding tasks and found that 45% of the AI-generated code samples introduced OWASP Top 10 vulnerabilities. CodeRabbit’s analysis found AI-generated code contains 2.74x more security vulnerabilities than human-written code. Black Duck’s 2026 OSSRA report found vulnerabilities per codebase up 107% year over year, with the mean codebase going from 280 to 581 known vulnerabilities. Martin Fowler’s framing is still the most honest I’ve seen: “Treat every slice as a PR from a rather dodgy collaborator who’s very productive in the lines-of-code sense, but you can’t trust a thing they’re doing.”

Perception is reality

The 19% slowdown number has been contested, fairly: the CI is wide (+2% to +39%), the study covered experienced developers on complex codebases, and METR has acknowledged design limitations. In February 2026, METR published an update that changed its experiment design after discovering that 30-50% of invited developers declined to take part in a study that would require working without AI, a selection effect that biased the original sample toward developers who benefit least from AI. The newer cohort (800+ tasks, 57 developers) produced an estimate of -4% with a CI of -15% to +9%: an interval that straddles zero, and a far less unfavorable result than the original. METR’s conclusion: “AI likely provides productivity benefits in early 2026.” The perception gap and the bottleneck problem remain real, but the exact magnitude of the July 2025 finding should be read with that caveat.

METR’s companion Horizon benchmark (Kwa et al., 2025) tracks how quickly model capability is improving: the 50%-task-completion time horizon for Claude 3.7 Sonnet was 60 minutes, and Claude Opus 4.6, released February 2026, reached 719 minutes. Measured from 2023, the horizon doubles roughly every 128 days. METR frames the productivity result as a point on that trend, not a fixed constant, though it also notes that its benchmark tasks are cleaner than real production work and that performance on “messier” tasks may improve more slowly. Whatever the trend, the perception gap is more robust than the exact slowdown figure, and it replicates.
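The time-horizon figures imply simple exponential arithmetic. A rough Python sketch, assuming a clean exponential trend h(t) = h0 · 2^(t/T) with doubling time T. METR fits its trend across many models, so a two-point calculation from the figures quoted here is only a sanity check, not a reproduction of its method:

```python
import math


def doublings_between(h_start: float, h_end: float) -> float:
    """Number of doublings separating two time horizons given in the same units."""
    return math.log2(h_end / h_start)


def projected_horizon(h0: float, days: float, doubling_days: float) -> float:
    """Horizon after `days`, assuming clean exponential growth: h0 * 2 ** (days / doubling_days)."""
    return h0 * 2 ** (days / doubling_days)


# Figures quoted in the text: 60 minutes (Claude 3.7 Sonnet), 719 minutes (Claude Opus 4.6).
print(f"{doublings_between(60, 719):.2f} doublings")  # 3.58 doublings

# One 128-day doubling period from a 60-minute starting point.
print(projected_horizon(60, 128, 128))  # 120.0
```

Going from 60 to 719 minutes is a little under four doublings. Projecting forward with this formula assumes the trend holds, which is exactly the open question for “messier” production work.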

Stack Overflow’s 2025 Developer Survey found favorable views of AI tools dropped from 70% to 60%, with 46% not trusting AI output and 66% citing “almost right but not quite” as their top frustration. Software.com’s monitoring of 250,000 developers found the median developer codes for 52 minutes per day, about 11% of a 40-hour week. The tools are fighting over 11% of the workday.
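The 11% figure, and the ceiling it implies, take two lines of arithmetic. A minimal sketch, assuming a five-day, 40-hour week (the 40 hours come from the text; the five days are our assumption). The second function is the limiting case of Amdahl’s law: even if coding took no time at all, the rest of the week would be untouched:

```python
def coding_share(minutes_per_day: float, hours_per_week: float = 40.0,
                 days_per_week: int = 5) -> float:
    """Fraction of the working week spent actively writing code."""
    return (minutes_per_day * days_per_week) / (hours_per_week * 60.0)


def max_overall_gain(share: float) -> float:
    """Best-case overall gain if the coding share took zero time: 1 / (1 - share) - 1."""
    return 1.0 / (1.0 - share) - 1.0


share = coding_share(52)  # 52 min/day * 5 days = 260 of 2,400 minutes
print(f"coding share: {share:.1%}")                      # coding share: 10.8%
print(f"best-case gain: {max_overall_gain(share):.1%}")  # best-case gain: 12.1%
```

Under these assumptions, even instant code generation would cap the overall gain for the median developer at about 12%.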

A field experiment covering 4,867 developers, run by researchers from MIT, Princeton, Wharton, and Microsoft, found that developers with above-median tenure showed no significant productivity increase from AI tools. The people best equipped to use AI are also the most likely to notice when it’s wrong and stop to fix it, so much of the time saved on drafting goes into verification. That helps explain why the tools work better for junior developers on simple tasks than for senior developers on the work that matters most.

GitHub’s 2022 Copilot study

GitHub’s 2022 Copilot study, the “55% faster” figure, still appears in enterprise sales decks in 2026. One JavaScript task: implementing a web server with HTTP endpoints. Thirty-five completers. No assessment of output quality, test coverage, or whether the code would survive production. Confidence interval: 21% to 89%. Participants knew they were being timed for productivity.

What the study actually shows is that when you pick a task specifically suited to AI assistance and measure completion time without checking correctness, AI looks fast. That’s a real finding. It’s just not the one being used to justify eight-figure licensing deals.

Macro data

Apollo’s Torsten Slok wrote in early 2026: “AI is everywhere except in the incoming macroeconomic data.” An NBER paper from February 2026 surveying nearly 6,000 executives found over 80% of firms reported AI had no impact on productivity over the preceding three years. Expected improvement over the next three: 1.4%.

Daron Acemoglu, who shared the 2024 Nobel Prize in Economics for research on how institutions shape prosperity and who has written extensively on technology and labor markets, projected a 0.5% total factor productivity increase from AI over the next decade. His reasoning: the economic value of AI concentrates in a narrow set of tasks that don’t represent enough of total economic activity to move aggregate numbers. The Bain arithmetic, at macroeconomic scale.

The standard optimist response is the IT comparison: computers entered enterprises in the 1970s and 1980s without producing measurable productivity improvements for a decade, then the gains came in the mid-1990s. It’s a reasonable historical parallel. I’m genuinely uncertain whether it applies. Computers replaced manual processes wholesale. AI coding tools are a faster ingredient inside a process whose other ingredients haven’t changed: the requirements still need to be understood, the review still needs to happen, the tests still need to pass. The productivity lag might resolve. Or the structure of the workflow might mean it doesn’t, even eventually. I don’t know, and the honest answer is that nobody does yet.

Where the value actually lands

Exploration is faster. When I’m working on something unfamiliar, a library I haven’t used, an API I’m integrating for the first time, the startup cost drops. A working first draft arrives in minutes rather than hours. That’s real, and I notice it. Whether it shows up in throughput metrics is a different question, and the data suggests mostly not, because the constraint was never the first draft.

Boilerplate, test scaffolding, documentation: these genuinely benefit too. The tasks that are well-scoped and low-stakes if approximately wrong are where these tools earn their keep. Anyone who’s used them seriously already knew this before the research said so.

Simon Willison, in an NPR interview: “Our job is not to type code into a computer. Our job is to deliver systems that solve problems.” The tools handle the first part better than they did a year ago. The second part hasn’t changed.

The right question

The useful product question, if the bottleneck is now review, is what makes review faster and more reliable, not what generates more code faster. AI tools that flag security issues, catch logic errors, and surface context about why code was written a certain way would attack the actual constraint. This is at least part of what Cursor is working toward with Graphite.

The harder problem is cultural. Bain and DORA say the same thing from different angles: AI amplifies what’s already there. Teams with good review practices and clear requirements get leverage. Teams without them produce more code that still doesn’t ship on time. The organizations that most want a tool to fix their velocity tend to be the ones with the process debt that prevents any tool from working.

I have no idea what the five-year picture looks like. The Solow paradox took a decade to resolve and resolved in ways nobody expected. Maybe the AI productivity gains show up in 2029 and the 2026 skeptics look naive. Genuinely possible. I try to hold that view honestly rather than dismiss it.

What the data shows now: at 92.6% monthly adoption and roughly 27% of production code AI-generated, the experiment has run at real scale. Organizational throughput gains haven’t moved past 10%. Experienced developers were slower with AI assistance in METR’s original study and not clearly faster in its follow-up. Bugs are up, review times are up, code quality metrics are declining, and DORA stability goes the wrong way as adoption increases.