On March 26, 2025, Sam Altman posted:

people love MCP and we are excited to add support across our products.

MCP is Anthropic’s Model Context Protocol. OpenAI is Anthropic’s most direct competitor. Altman was endorsing a rival’s standard. That post may be the most significant event in enterprise AI infrastructure in 2025. When your main competitor adopts your protocol, the war is close to over. I’ve been watching this play out since Anthropic launched MCP in November 2024, and I want to work through what’s happening: who controls what, what “interoperability” means in practice, and whether any of this follows patterns we’ve seen before.

What is MCP

MCP is a client-server protocol, licensed MIT, built on JSON-RPC 2.0. The mental model is simple: an AI application (the host) runs one client per connection, and each client talks to an MCP server that exposes tools, data sources, and context. Instead of building a bespoke integration every time Claude or GPT needs to talk to Salesforce, GitHub, or your internal database, you build one MCP server. Any compatible host can then use it.
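To make the client-server model concrete, here is roughly what a client sends over the wire. This is a minimal Python sketch that only builds the JSON-RPC 2.0 messages and does not open a transport (stdio or HTTP). The method names `initialize`, `tools/list`, and `tools/call` follow the MCP specification. The client name, the tool `lookup_account`, and its arguments are hypothetical. A real session also sends a `notifications/initialized` notification after the handshake, which is omitted here.

```python
import json
from typing import Optional


def jsonrpc_request(req_id: int, method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object (params omitted when not needed)."""
    msg = {"jsonrpc": "2.0", "id": req_id, "method": method}
    if params is not None:
        msg["params"] = params
    return msg


# 1. Handshake: the client announces the protocol revision and its capabilities.
init = jsonrpc_request(1, "initialize", {
    "protocolVersion": "2025-06-18",
    "capabilities": {},
    "clientInfo": {"name": "example-host", "version": "0.1.0"},  # hypothetical client
})

# 2. Discovery: ask the server which tools it exposes (names, descriptions, input schemas).
list_tools = jsonrpc_request(2, "tools/list")

# 3. Invocation: call one tool by name with structured arguments.
call_tool = jsonrpc_request(3, "tools/call", {
    "name": "lookup_account",                # hypothetical tool name
    "arguments": {"account_id": "ACME-42"},  # hypothetical arguments
})

for msg in (init, list_tools, call_tool):
    print(json.dumps(msg))
```

The same three messages work against any compliant server. That is the whole point: the host learns a server's tools at runtime instead of having them hard-coded.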

The problem it solves, and the reason it spread so fast, is integration sprawl. Without a shared standard, every new AI model times every new tool equals a new custom integration: M models and N tools need M × N connectors, which grows quadratically as both sides grow. MCP tries to cut that to M + N, with one client per model and one server per tool.
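A quick Python illustration of that arithmetic. The model and tool counts below are made up for illustration and are not measurements of any real deployment.

```python
def bespoke_integrations(models: int, tools: int) -> int:
    # Without a shared protocol, every (model, tool) pair needs its own glue code.
    return models * tools


def protocol_integrations(models: int, tools: int) -> int:
    # With a shared protocol, each model implements one client and each tool one server.
    return models + tools


for m, t in [(3, 10), (5, 50), (10, 200)]:
    print(f"{m} models x {t} tools: bespoke={bespoke_integrations(m, t)}, "
          f"protocol={protocol_integrations(m, t)}")
# 3 models x 10 tools: bespoke=30, protocol=13
# 5 models x 50 tools: bespoke=250, protocol=55
# 10 models x 200 tools: bespoke=2000, protocol=210
```

The gap widens as both sides grow. That widening gap, rather than any single integration, is what makes a shared protocol worth the coordination cost.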

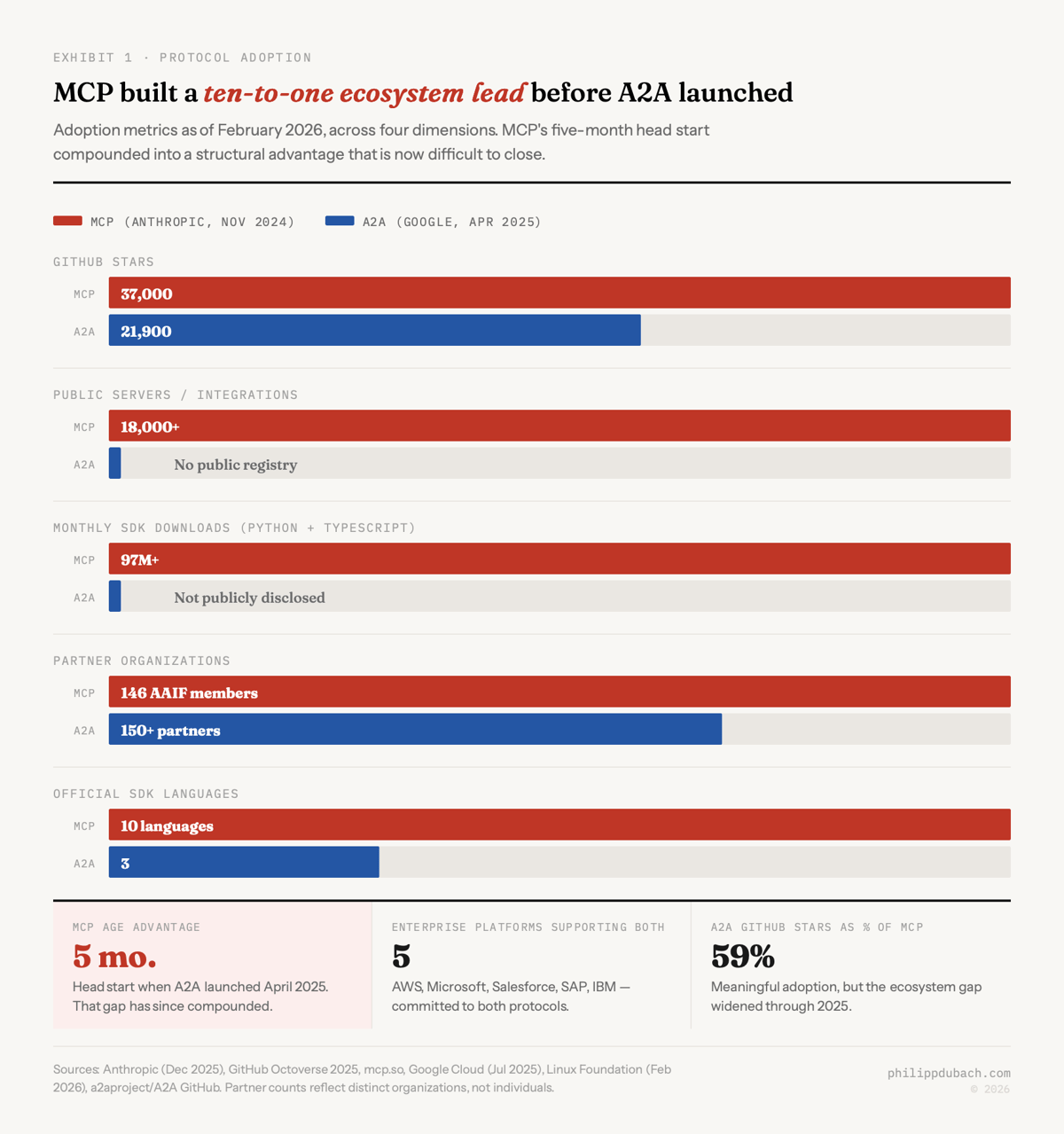

By December 2025, Anthropic’s own count put the public MCP server ecosystem at 10,000+ active servers and 97 million monthly SDK downloads across the Python and TypeScript SDKs. GitHub’s 2025 Octoverse report flagged MCP as a standout, hitting 37,000 stars in eight months. The unofficial registry mcp.so lists over 18,000 servers. Official SDKs now cover ten languages, including Python, TypeScript, Java, C#, Go, Kotlin, Rust, and Swift.

Companies building MCP integrations include Microsoft, Salesforce, Cloudflare, GitHub, Stripe, Atlassian, Figma, Snowflake, Databricks, and New Relic. At Cloudflare’s MCP Demo Day in May 2025, Asana, PayPal, Sentry, and Webflow all shipped remote servers in a single afternoon. Gartner predicts 75% of API gateway vendors will have MCP features by 2026.

OpenAI’s adoption went beyond Altman’s post. MCP support rolled out across their Agents SDK (March 2025), Responses API (May 2025), Realtime API (August 2025), and ChatGPT Developer Mode (September 2025). The two companies later co-authored the MCP Apps Extension. You don’t see that often between direct competitors.

One performance claim circulates in blog posts and marketing materials: that organizations implementing MCP report “40–60% faster agent deployment times.” I have not found a primary source for this. No survey, no case study, no named company. I’d treat it as marketing content until someone produces the underlying data.

Google’s A2A fills a different layer

Google launched A2A, the Agent-to-Agent protocol, at Cloud Next on April 9, 2025, five months after MCP. Google didn’t position A2A as a replacement for MCP. They called it a complement. I think that’s honest, but it takes a minute to see why.

MCP connects an agent to tools; A2A connects agents to each other. That difference in scope shapes how each protocol behaves.

When an MCP host calls an MCP server, it knows exactly what it’s getting: structured tool descriptions, specific function signatures, predictable outputs. The agent can see inside the tool. A2A works differently. Agents remain opaque to each other. An A2A agent publishes an “Agent Card,” a JSON metadata document at a well-known URL, describing its capabilities and authentication requirements. Other agents discover it, negotiate tasks through a defined lifecycle (submitted, working, input-required, completed), and collaborate without sharing memory or internal state.
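Here is a minimal Python sketch of the two A2A pieces described above, under stated assumptions:

- The Agent Card fields are an abridged subset loosely modeled on the A2A spec.
- The parts-supplier agent and its URL are hypothetical.
- The well-known path is an assumption, since spec revisions have used both `agent.json` and `agent-card.json`.
- The transition table is a simplification covering only the four states named above. The spec defines more, such as failed and canceled.

```python
import json
from urllib.parse import urljoin

# Abridged, hypothetical Agent Card: it advertises skills, not internals.
agent_card = {
    "name": "Parts Supplier Agent",
    "description": "Checks inventory and quotes lead times for replacement parts.",
    "url": "https://parts.example.com/a2a",
    "version": "1.0.0",
    "capabilities": {"streaming": False},
    "skills": [{
        "id": "quote-part",
        "name": "Quote a part",
        "description": "Return price and lead time for a part number.",
    }],
}


def card_url(base: str) -> str:
    # Assumed well-known discovery path (has varied across spec revisions).
    return urljoin(base, "/.well-known/agent-card.json")


# Simplified task lifecycle using only the four states named in the text.
TRANSITIONS = {
    "submitted": {"working"},
    "working": {"input-required", "completed"},
    "input-required": {"working"},
    "completed": set(),  # terminal state
}


def advance(state: str, new_state: str) -> str:
    """Move a task to new_state, rejecting transitions the table doesn't allow."""
    if new_state not in TRANSITIONS[state]:
        raise ValueError(f"illegal transition {state} -> {new_state}")
    return new_state


state = "submitted"
for nxt in ["working", "input-required", "working", "completed"]:
    state = advance(state, nxt)

print(json.dumps(agent_card, indent=2))
print(card_url("https://parts.example.com"), state)
```

Note what the card leaves out: nothing about the agent’s prompts, memory, or tools. A caller learns what the agent offers, not how it works. That is the opacity MCP deliberately doesn’t have.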

Google’s own documentation uses a repair shop analogy. MCP is how the mechanic uses diagnostic equipment. A2A is how the customer talks to the shop manager, or how the manager coordinates with a parts supplier. The analogy holds: both conversations happen in a real repair shop, and cutting either one doesn’t simplify anything.

A2A launched with 50+ partner organizations and grew to 150+ by July 2025. The list includes Atlassian, Salesforce, SAP, ServiceNow, McKinsey, BCG, Accenture. Google donated A2A to the Linux Foundation in June 2025. IBM’s competing Agent Communication Protocol merged into A2A in August, with IBM’s engineers joining the technical steering committee. As of February 2026, A2A has roughly 21,900 GitHub stars, about 40% of MCP’s total.

What history can tell us about how this ends

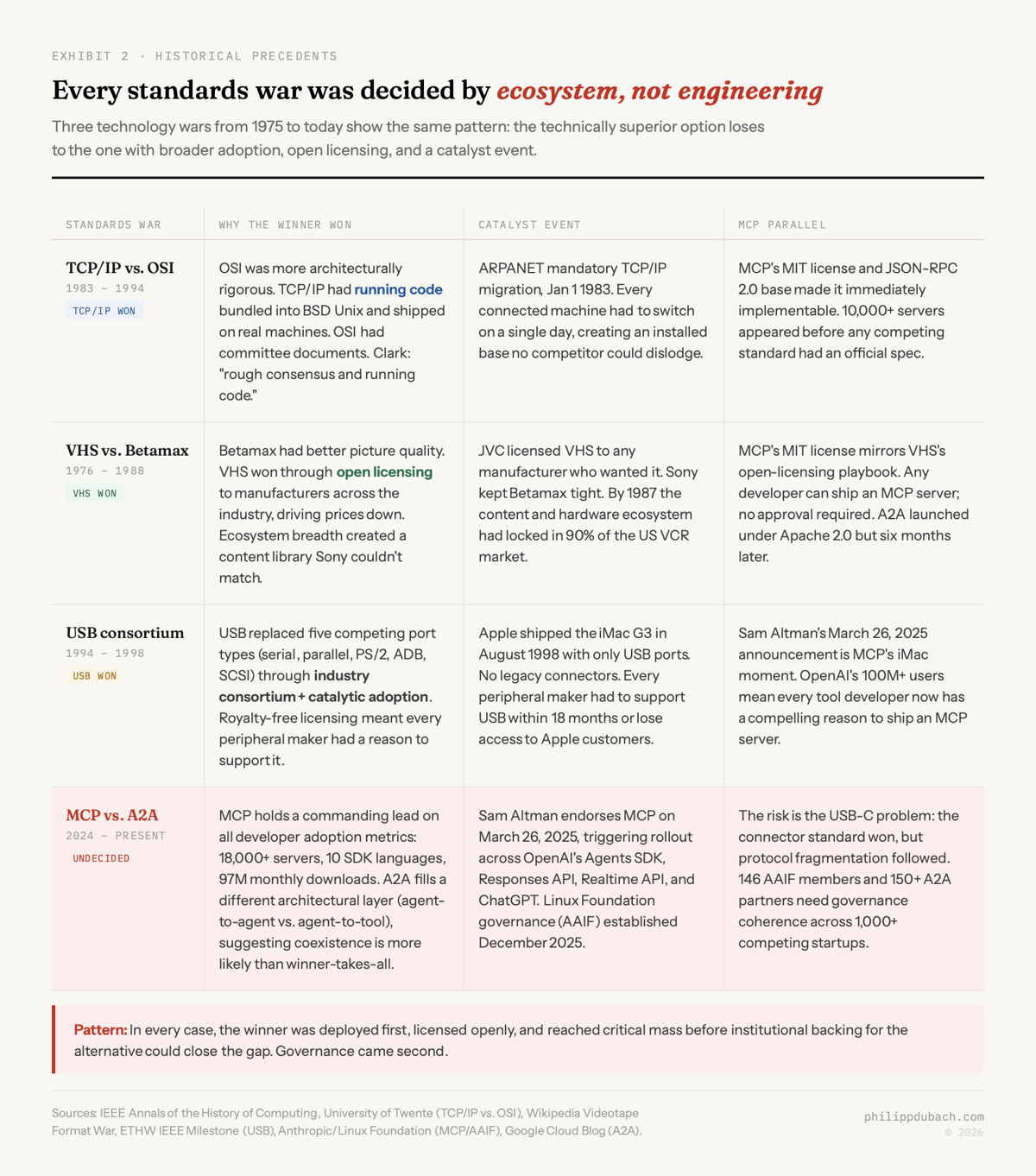

Standards wars have a consistent pattern. The winner is almost never the technically superior option. It’s the one that ships first and gets adopted before anyone can catch up.

TCP/IP and OSI are the canonical example. The OSI model, published by ISO in 1984, was architecturally more rigorous than TCP/IP’s four-layer stack. It had real institutional backing: the US Commerce Department published its GOSIP mandate in August 1988, with formal enforcement beginning in 1990. European governments followed. OSI still lost. TCP/IP won because it had running code, freely available implementations bundled with BSD Unix workstations, while OSI remained elegant theory trapped in committee processes. By 1994 the outcome was obvious. David Clark’s IETF motto captures why:

We reject kings, presidents and voting. We believe in rough consensus and running code.

VHS versus Betamax is the other lesson people cite, often incorrectly. Betamax had better picture quality. VHS won anyway, and the usual explanation is the movie library. That’s part of it. But JVC openly licensed VHS to manufacturers across the industry, which drove prices down and built a content ecosystem Sony couldn’t match. By 1987, VHS held 90% of the US VCR market. Sony conceded in 1988 by manufacturing VHS players. Ecosystem breadth, once established, creates a gravitational field that technical superiority alone can’t escape.

USB is a more recent example with a twist. The consortium (Compaq, DEC, IBM, Intel, Microsoft, NEC, and Nortel) formed in 1994 and shipped USB 1.0 in January 1996. Adoption was sluggish until Apple shipped the iMac G3 in August 1998 with USB as its only peripheral ports, forcing the entire peripheral industry to follow. That’s the twist: when one player is central enough to an ecosystem, its adoption forces everyone else’s hand. OpenAI adopting MCP in March 2025 is MCP’s iMac moment.

But USB also offers a warning. USB-C’s physical connector won universally, and then the underlying protocol fragmented. The same connector could carry anything from USB 2.0 to USB4 data rates, and anywhere from 5W to 240W of power, depending on what you plugged together. The EU eventually legislated convergence through an amendment to its Radio Equipment Directive, whose common-charger requirements took effect December 28, 2024. A standard can win and still fragment when nobody governs the details.

What now?

The Linux Foundation’s Agentic AI Foundation (AAIF), launched December 9, 2025 with Anthropic, OpenAI, and Block as co-founders, now has 146 member organizations, including JPMorgan Chase, American Express, Autodesk, Red Hat, and Huawei. MCP sits within AAIF, while A2A has its own Linux Foundation governance body. The result is one umbrella with two separate projects.

This is the governance structure you typically see after a standards war has been decided in principle but before the implementation details have been hammered out. Think of the W3C in 1994, not the W3C in 1998. For anyone making architectural decisions right now, the practical question isn’t MCP versus A2A. Most major enterprise platforms, including Salesforce, SAP, IBM, Microsoft, and AWS, have already committed to both. The question is sequencing and depth.

ISG analyst David Menninger put it clearly: “MCP first for sharing context; then A2A for dynamic interaction among agents.” That’s the sequence I’d follow. MCP is the more mature protocol with the larger server ecosystem, and the 10,000+ existing servers represent integration work that doesn’t need to be rebuilt. Start there. Layer A2A on top when your use cases require multi-agent coordination across organizational boundaries, such as supply chains or cross-platform orchestration, which is exactly where the Tyson Foods and Adobe deployments have landed.

MCP security deserves a separate conversation. Astrix Security’s research found that 53% of MCP servers rely on static credentials rather than OAuth. A critical vulnerability in the mcp-remote npm package (CVE-2025-6514) exposed 437,000+ installations to shell injection. TCP/IP had its share of early-stage security problems in the 1980s, so I’m not calling this fatal. But these are real vulnerabilities, and they will cause real incidents before the posture matures.
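One general lesson from injection bugs in MCP clients is to treat anything a server sends, including URLs, as untrusted input. Below is a minimal Python sketch of that defensive pattern. It assumes the client must open an OAuth authorization URL supplied by a server. The checks are:

- Allow only `https`.
- Reject whitespace and control characters.
- Require a host.
- Pass the URL as a single argument instead of splicing it into a shell command.

The function names are hypothetical and `xdg-open` stands in for a Linux launcher. This is not the actual mcp-remote patch.

```python
from urllib.parse import urlparse

ALLOWED_SCHEMES = {"https"}


def check_authorization_url(url: str) -> str:
    """Validate a server-supplied URL before the client opens it; raise ValueError if suspicious."""
    # Whitespace and control characters have no business in an endpoint URL.
    if any(ch.isspace() or ord(ch) < 0x20 or ord(ch) == 0x7F for ch in url):
        raise ValueError("whitespace or control character in URL")
    parsed = urlparse(url)
    # Allowlist the scheme: rejects file:, javascript:, and OS-specific handler schemes.
    if parsed.scheme.lower() not in ALLOWED_SCHEMES:
        raise ValueError(f"refusing scheme {parsed.scheme!r}")
    if not parsed.hostname:
        raise ValueError("URL has no host")
    return url


def browser_argv(url: str) -> list[str]:
    # Hand the URL over as one argv element; never format it into a shell string.
    return ["xdg-open", check_authorization_url(url)]
```

On Linux you would pass the returned list to `subprocess.run(argv)` with the default `shell=False`. Other platforms need their own launcher. Validation narrows the attack surface, but it doesn’t replace keeping the client itself patched.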

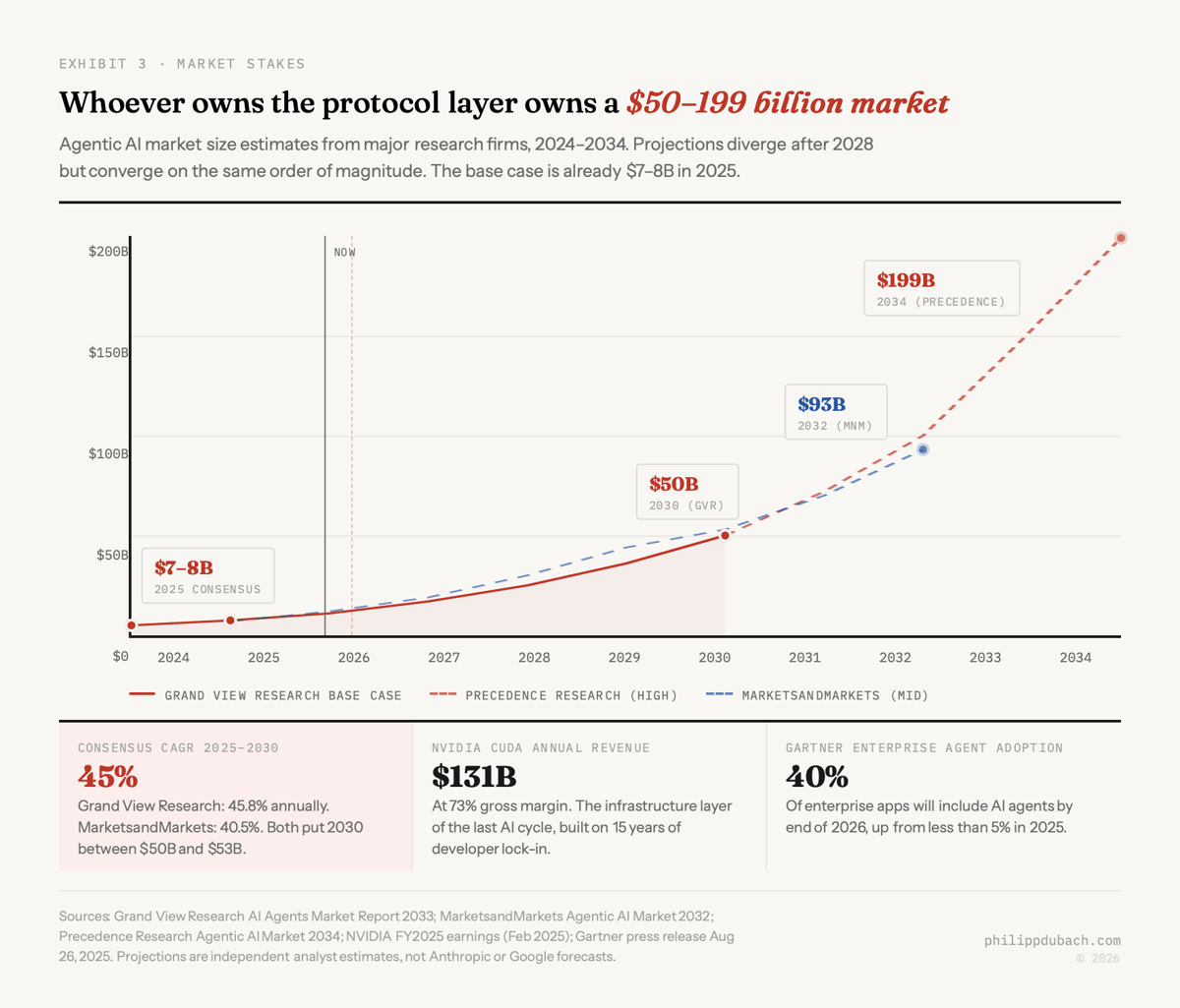

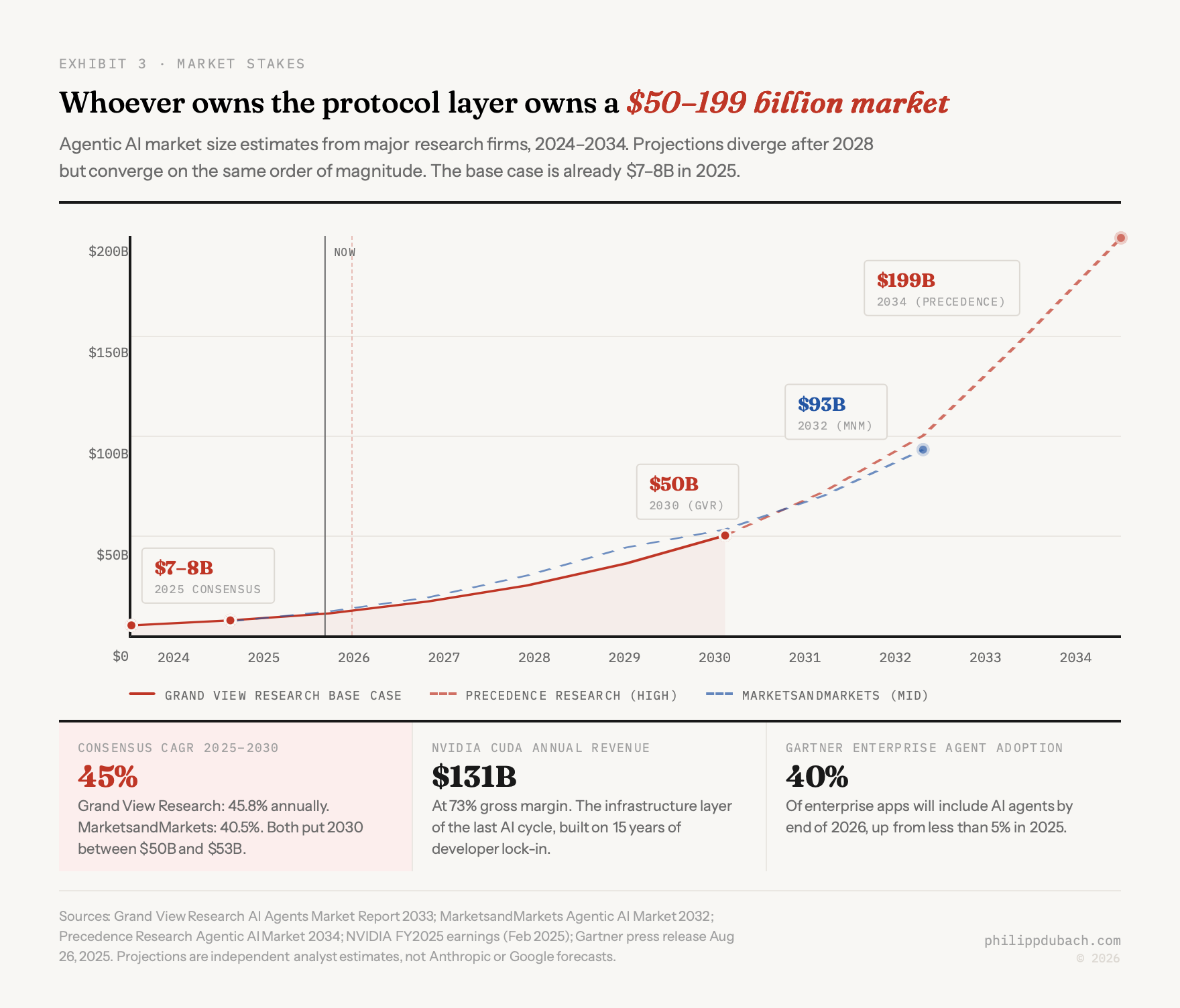

Multiple analyst firms converge on an agentic AI market of roughly $7–8 billion in 2025, growing at 40–50% annually, with projections ranging from $50 billion by 2030 to $199 billion by 2034. NVIDIA’s CUDA is the comparison that matters: 4 million developers, 15 years of compounding library investment, and switching costs that help sustain NVIDIA’s $130.5 billion in annual revenue at 73% gross margins. MCP’s 97 million monthly downloads aren’t CUDA yet. But the trajectory points the same direction.
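As a sanity check on how those figures fit together, compounding the 2025 base at the quoted growth rates brackets both published endpoints. This quick Python calculation pairs the low base with the low rate and the high base with the high rate. Applying one constant rate for every year is a simplifying assumption.

```python
def project(base_billion: float, annual_growth: float, years: int) -> float:
    """Compound growth: base * (1 + g) ** years."""
    return base_billion * (1 + annual_growth) ** years


bands = {}
for year in (2030, 2034):
    n = year - 2025
    low = project(7.0, 0.40, n)   # low base, low growth
    high = project(8.0, 0.50, n)  # high base, high growth
    bands[year] = (low, high)
    print(f"{year}: ${low:.0f}B to ${high:.0f}B")
# 2030: $38B to $61B
# 2034: $145B to $308B
```

Both the $50 billion and $199 billion projections fall inside their bands, so the numbers are at least internally consistent. The width of each band shows how sensitive these forecasts are to the assumed growth rate.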

My best guess (and I want to be clear it’s a guess): MCP becomes the infrastructure layer and A2A the coordination layer, much as TCP handles transport while HTTP handles application-layer communication. Different floors of the same building. The open question is whether 146 AAIF members can keep the standards coherent under competitive pressure from more than 1,000 active agentic AI startups, each with an economic incentive to differentiate.