Investing at the Edge of Knowledge, Part 1

David Ricardo made a fortune buying British government bonds four days before the Battle of Waterloo. He was not a military analyst. He had no basis to compute the odds of Napoleon’s defeat, or victory, or any of the ambiguous outcomes in between. But he understood something that most of his contemporaries did not: the nature of his own ignorance was the same as everyone else’s, the seller was desperate, competition was thin, and the pounds he’d gain if Wellington won were worth far more than the pounds he’d lose if Wellington fell.

Ricardo’s edge was not information. It was a correct assessment of what kind of not-knowing he was facing.
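To make the asymmetry concrete, here is a minimal sketch of the break-even arithmetic behind a bet like Ricardo’s. The payoff figures are hypothetical and chosen only for illustration (nothing here comes from the historical record), and the function names are my own. The point is that when the gain is a multiple of the loss, the bet is attractive across a wide range of win probabilities, so you don’t need to know the odds precisely.

```python
def break_even_probability(gain: float, loss: float) -> float:
    """Win probability at which the bet's expected value is exactly zero."""
    if gain <= 0 or loss <= 0:
        raise ValueError("gain and loss must both be positive")
    return loss / (gain + loss)


def bet_expected_value(p_win: float, gain: float, loss: float) -> float:
    """Expected value of a bet that pays `gain` on a win and costs `loss` otherwise."""
    return p_win * gain - (1.0 - p_win) * loss


# Hypothetical payoffs: win 3 units if things go well, lose 1 unit if they don't.
gain, loss = 3.0, 1.0
p_star = break_even_probability(gain, loss)

# Any win probability above p_star gives the bet a positive expected value.
ev_at_coin_flip = bet_expected_value(0.5, gain, loss)
```

With these made-up numbers the bet breaks even at a 25% chance of winning. An investor who can say only that the odds are “probably better than one in four” already has enough to act.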

That distinction, between different kinds of not-knowing, is mostly absent from finance. Richard Zeckhauser, the Frank P. Ramsey Professor of Political Economy at Harvard, made it the foundation of his 2006 paper “Investing in the Unknown and Unknowable,” published in Capitalism and Society. The paper takes no derivatives and runs no regressions. What it does instead is more valuable: it provides a taxonomy of not-knowing, and then shows why the category that finance theory handles worst is the one where the biggest fortunes have been made.

This is Part 1 of a five-part series that works through Zeckhauser’s framework and extends it. The goal is not a literature review. It’s an attempt to build a working vocabulary for the kind of investing that modern portfolio theory was never designed to address.

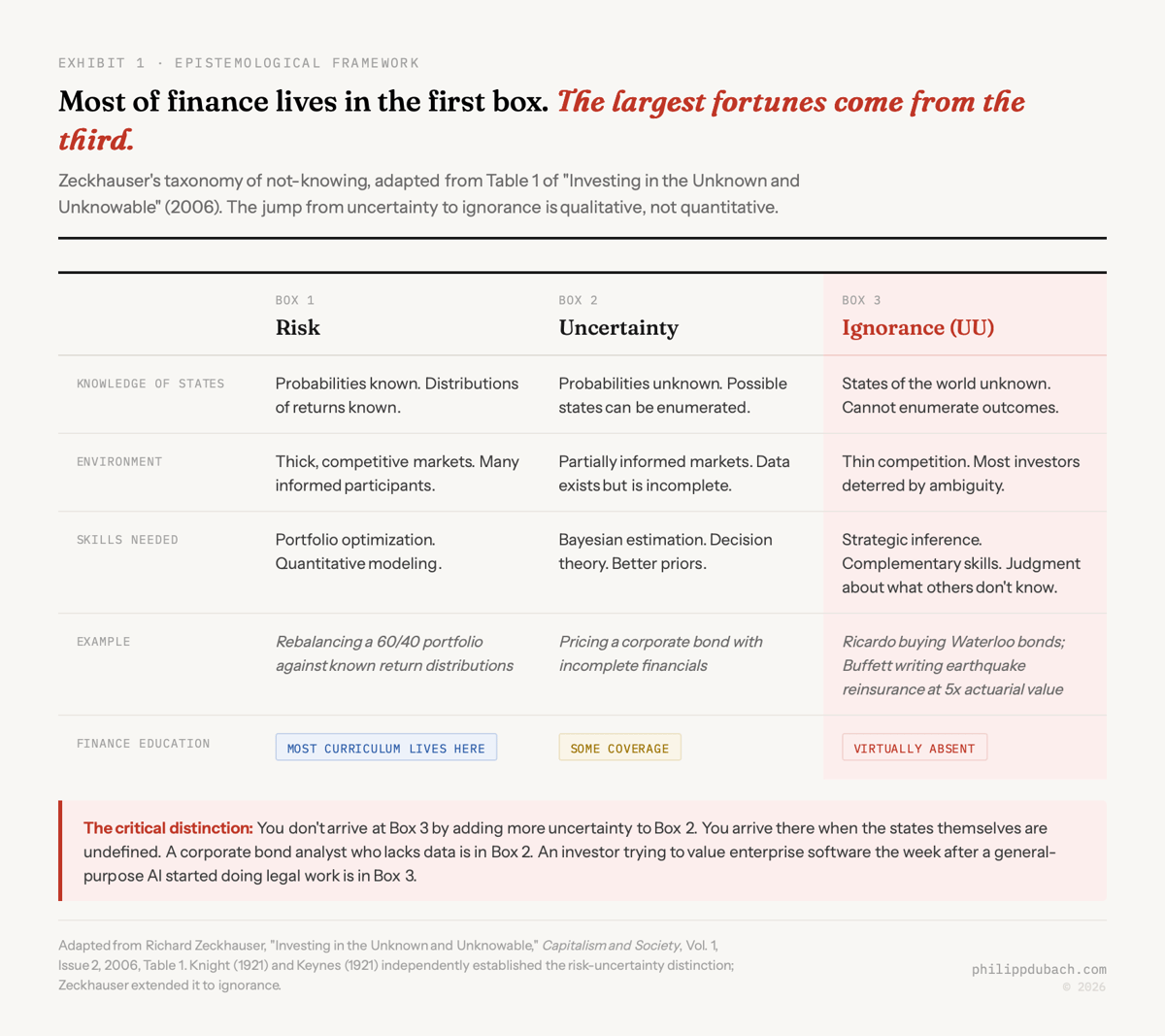

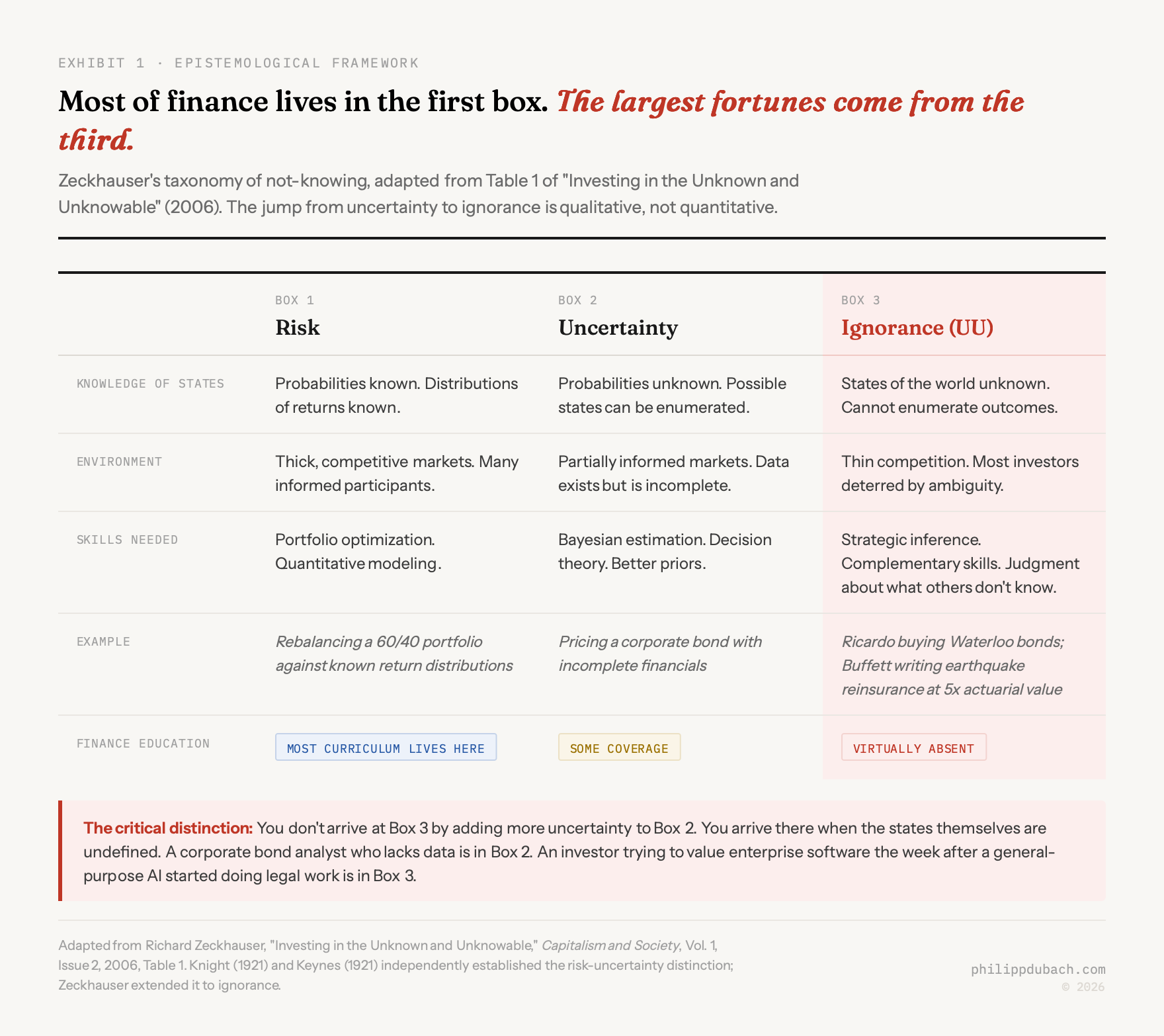

The taxonomy

Zeckhauser presents three categories of not-knowing. Each demands different skills. Each rewards a different kind of investor. And the jump between them is not a smooth gradient. It’s a cliff.

The first box is risk. Probabilities are known, distributions of returns are known, and the challenge is optimization. This is the world of the capital asset pricing model, of mean-variance portfolios, of the efficient frontier. You hold a 60/40 stock-bond portfolio and rebalance quarterly. The math is clean. Nobel Prizes were awarded for it. Finance education lives here.

The second box is uncertainty. You can identify the possible states of the world, but you can’t assign reliable probabilities. A corporate bond analyst looking at incomplete financials knows the company might default or might not, knows the recovery rate might be 40 cents or 60 cents on the dollar, but can’t compute a precise probability for either. The skill that pays here is Bayesian estimation: forming the best prior you can from limited data, updating as information arrives, and having the temperament to act on imperfect beliefs. This is harder than Box 1, but it’s still recognizable territory. Decision theory was built for it.
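As an illustration of what Bayesian estimation looks like in Box 2, here is a minimal sketch of a Beta-Binomial update for a default probability. Everything specific in it is an assumption of mine, not Zeckhauser’s: the `BetaBelief` class, the prior, and the counts of comparable companies are all hypothetical. Note what makes it a Box 2 exercise: the two states, default and no default, are fixed in advance, and only their probability is in doubt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BetaBelief:
    """Beta(alpha, beta) belief about a default probability (illustrative only)."""
    alpha: float  # pseudo-count of defaults
    beta: float   # pseudo-count of survivals

    def mean(self) -> float:
        """Point estimate of the default probability."""
        return self.alpha / (self.alpha + self.beta)

    def update(self, defaults: int, survivals: int) -> "BetaBelief":
        """Conjugate update: add observed counts to the pseudo-counts."""
        return BetaBelief(self.alpha + defaults, self.beta + survivals)


# Hypothetical prior: roughly a 10% default rate, held weakly (10 pseudo-observations).
prior = BetaBelief(alpha=1.0, beta=9.0)

# Hypothetical evidence: of 20 comparable issuers, 3 defaulted and 17 did not.
posterior = prior.update(defaults=3, survivals=17)

prior_estimate = prior.mean()          # 1 / 10
posterior_estimate = posterior.mean()  # 4 / 30
```

The estimate moves from 10% to about 13% as evidence arrives. The machinery works only because the outcomes being counted were defined before any counting began.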

The third box is ignorance. Zeckhauser abbreviates it UU: unknown and unknowable. Here, even the identity of possible future states is undefined. You don’t have a distribution to estimate because you can’t enumerate what you’re estimating over. The question isn’t “what’s the probability of outcome X?” It’s “what even is X?” This is where Ricardo was standing at Waterloo. This is where Warren Buffett was standing in 1996 when he wrote a $1.5 billion reinsurance policy for the California Earthquake Authority at a premium far above actuarial estimates, coverage that the capital markets had failed to place. The New York financial community couldn’t model the risk. Buffett’s insight was that nobody could, that the Authority was not better informed about seismic activity than he was, and that the price was absurdly favorable given the symmetry of ignorance.

The boxes are not a spectrum. You don’t get from Box 2 to Box 3 by adding more uncertainty. You get there when the state space itself is undefined. In Box 2, you might not know whether a company will default, but you know that “default” and “no default” are the relevant categories. In Box 3, you don’t even know the categories. That’s a qualitative difference, not a quantitative one.

Why finance forgot the third box

The strange thing is that the third box was identified a century ago. Twice, independently, in the same year.

Frank Knight published Risk, Uncertainty and Profit in 1921. His central argument was that entrepreneurial profit is compensation for bearing true uncertainty: situations where probabilities cannot be meaningfully calculated. Risk, in Knight’s framework, is insurable. Uncertainty is not. The distinction is not about the degree of confidence in your estimate. It’s about whether the concept of a probability estimate even applies.

John Maynard Keynes published A Treatise on Probability the same year. His angle was different but convergent. Keynes introduced the idea of the “weight of evidence”: a thin body of evidence yields low weight even when the point estimate looks reasonable. In his 1937 Quarterly Journal of Economics article, he made the distinction explicit: “By ‘uncertain’ knowledge, let me explain, I do not mean merely to distinguish what is known for certain from what is only probable. The game of roulette is not subject, in this sense, to uncertainty.” Roulette is risky. The future of interest rates, the price of copper twenty years out, the obsolescence of a technology: these are uncertain in the deeper sense. The distinction mattered to Keynes, and it should matter to anyone building a portfolio.

Both arguments lost. The discipline moved toward formalization, and formalization required calculable probabilities. The efficient markets hypothesis, rational expectations, CAPM, Black-Scholes: all of these live in Box 1 or assume that Box 2 can be reduced to Box 1 with sufficient data and computing power. This isn’t a criticism of these models within their domain. They’re brilliant engineering for the problems they were designed to solve. It’s a claim about the boundaries of that domain, and about how much of real-world investing sits outside it.

LeRoy and Singell (1987) offered a provocative reinterpretation in the Journal of Political Economy: Knight’s real distinction, they argued, was about insurability, not probability. Uncertainty describes situations where insurance markets collapse because of moral hazard and adverse selection, not simply because probabilities are subjective. This reading is more radical than the standard one. It says the breakdown isn’t epistemic (we don’t know enough) but structural (the market itself can’t price the risk). That structural breakdown is precisely what happened in 1996 when Wall Street couldn’t write the California earthquake policy, and again in 2025 when insurance markets in parts of the American Southeast and West simply stopped functioning.

Kay and King picked up this thread in their 2020 book Radical Uncertainty, arguing that the conflation of risk and uncertainty has caused systematic mismanagement across finance and policy. Their prescription is “narrative reasoning” rather than probabilistic optimization for decisions facing genuine uncertainty. I’m not sure narrative reasoning is sufficient, but I’m confident that probabilistic optimization is insufficient. The honest position is somewhere in between, and Zeckhauser’s framework gives you the vocabulary to think about where.

Bewley (2002) formalized the problem differently. Working from a 1986 Cowles Foundation paper, he dropped the completeness axiom from expected utility theory. In standard theory, you can always rank alternatives: you prefer A to B, or B to A, or you’re indifferent. Bewley said: sometimes you simply can’t rank them. When alternatives are incomparable, sticking with the status quo is rational, not a bias. This gives mathematical expression to something practitioners know in their bones: there’s a difference between “I’m going to hold because I think the price will go up” and “I’m going to hold because I have no coherent basis for predicting what will happen and the cost of acting without a basis is higher than the cost of staying put.”
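Here is a rough sketch of the unanimity idea behind that result, under simplifying assumptions of my own. Instead of a single prior, the investor holds a set of plausible priors, and one option is ranked above another only if it has the higher expected payoff under every prior in the set. The function names, payoffs, and priors are all hypothetical, and the sketch is not Bewley’s own formulation. It also still assumes the states can be listed.

```python
from typing import Sequence

Prior = Sequence[float]  # one probability per state of the world


def expected_payoff(payoffs: Sequence[float], prior: Prior) -> float:
    """Expected payoff of an option under a single prior."""
    return sum(p * x for p, x in zip(prior, payoffs))


def compare(option: Sequence[float], status_quo: Sequence[float],
            priors: Sequence[Prior]) -> str:
    """Rank `option` against `status_quo` by unanimity across all priors.

    Simplification: exact ties under any prior are treated as incomparable.
    """
    diffs = [expected_payoff(option, q) - expected_payoff(status_quo, q)
             for q in priors]
    if all(d > 0 for d in diffs):
        return "better"
    if all(d < 0 for d in diffs):
        return "worse"
    return "incomparable"


# Two states; hypothetical payoffs for holding cash versus buying.
hold = (0.0, 0.0)
buy = (10.0, -5.0)

# With widely spread priors, buying wins under one and loses under another.
wide_priors = [(0.2, 0.8), (0.8, 0.2)]
# With a tighter set of priors, buying wins under all of them.
narrow_priors = [(0.6, 0.4), (0.8, 0.2)]

verdict_wide = compare(buy, hold, wide_priors)      # "incomparable"
verdict_narrow = compare(buy, hold, narrow_priors)  # "better"
```

When the verdict is “incomparable,” staying with the status quo is the rational default: it follows directly from the priors disagreeing, and no behavioral bias is needed to explain it.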

Why the third box is growing

This hundred-year-old taxonomy feels more relevant in 2026 than it did in 2006. Technological change creates entirely new categories of outcomes faster than models can absorb them. The state space itself is expanding.

I wrote recently about the SaaSpocalypse paradox: the market simultaneously pricing AI capex failure and AI destroying all enterprise software, when both cannot be true. That sell-off is a textbook example of Zeckhauser’s third box. The problem wasn’t that investors struggled to estimate the probability of known outcomes. The problem was that the outcomes themselves were undefined. What does “CRM” mean when AI agents replace human users? What does “per-seat licensing” mean when the number of seats might go to zero or might multiply by ten as agents proliferate? What does “enterprise software moat” mean when the moat was always the trained-user interface and the interface is now natural language? These aren’t questions with difficult probability estimates. They’re questions where the categories haven’t been invented yet.

Nobody in January 2026 could enumerate the states of the world for enterprise software post-Claude Cowork plugins. Not “the probabilities were hard to estimate.” The states themselves were undefined. That’s not Box 2. That’s Box 3.

And Box 3 is where the IGV software ETF fell 32% from its September peak, where hedge funds made $24 billion shorting the sector, where the relative strength index (RSI) hit 18 (the most oversold reading in the ETF’s history), and where earnings growth nonetheless continued at 17%. The disconnect between operating results and market prices is exactly what Zeckhauser’s framework predicts: when the state space is undefined, investors who require defined state spaces to make decisions leave the market. Their departure compresses prices beyond what any fundamental analysis would justify. The mispricing lives in the gap between what the asset is worth and what institutions are able to hold.

This pattern will recur. AI is not the last technology that will generate new categories of outcomes that nobody anticipated. Every time it happens, the same sequence plays out: Box 3 conditions emerge, institutions flee because their models require Box 1 or Box 2 inputs, prices overshoot, and unconstrained investors who understand the nature of their own ignorance pick up the pieces. Zeckhauser wrote his paper two decades ago. The mechanism he described has, if anything, accelerated.

The taxonomy tells you what kind of problem you’re facing. It doesn’t tell you what to do about it. That requires understanding why most investors run from Box 3, and whether running is rational. That’s Part 2 (coming soon).