Alphabet’s free cash flow is projected to fall roughly 90% in 2026. Not because the business is in trouble. Because the company has committed to spending $83–93 billion more on capital expenditure than it did last year.

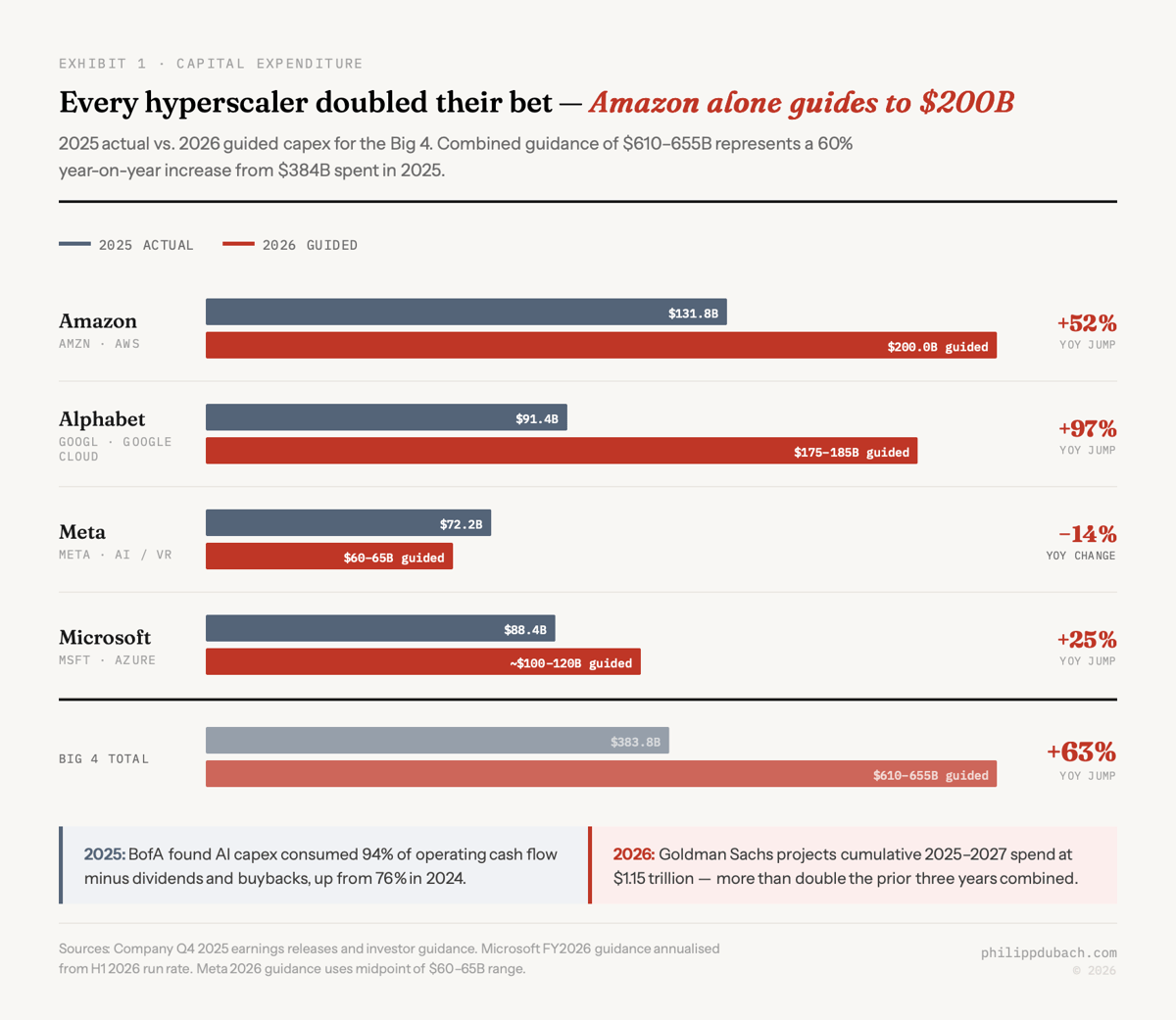

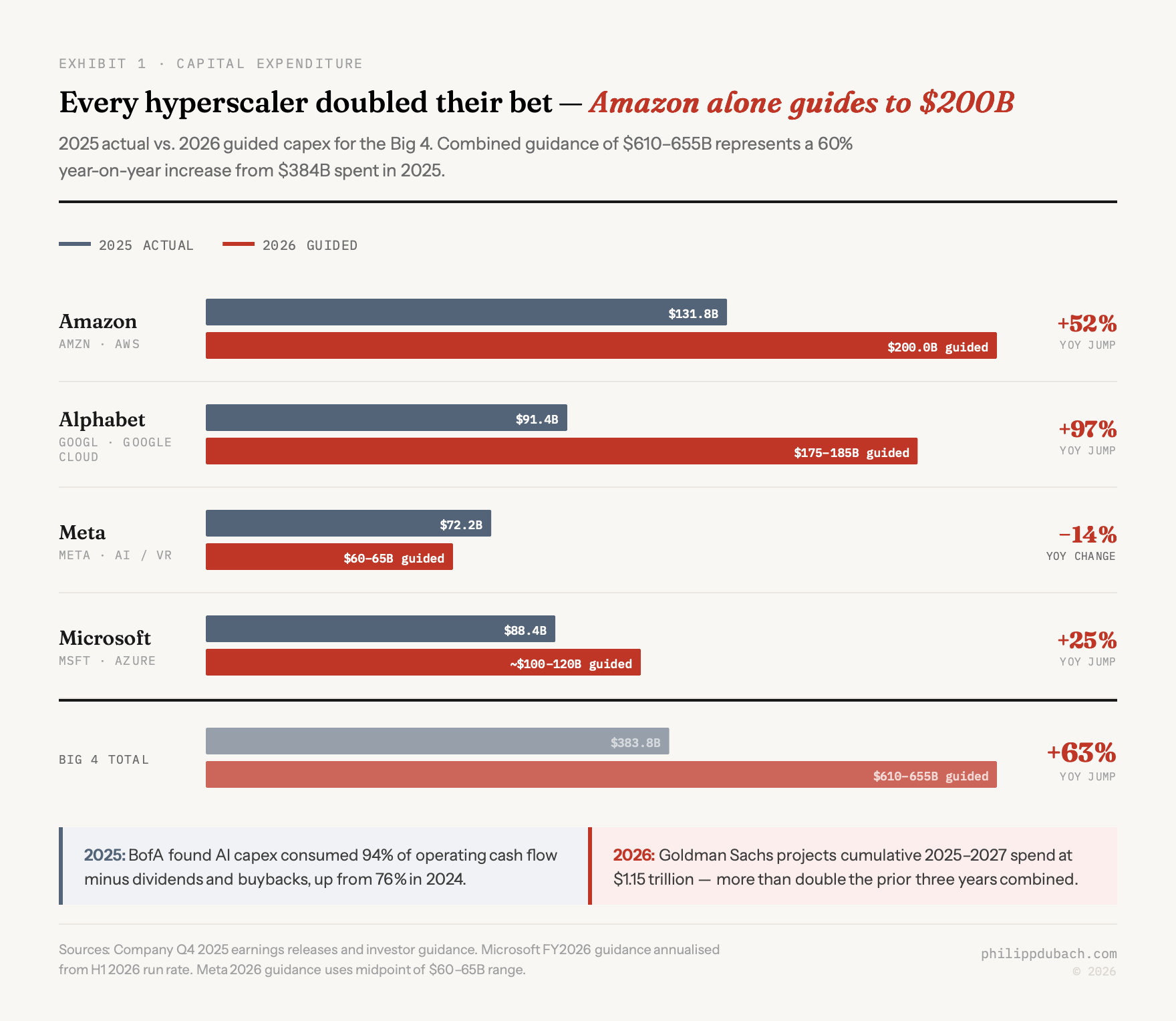

That is what $660–690 billion in AI capex looks like up close. Amazon alone guided to $200 billion. Meta’s long-term debt more than doubled to $58.7 billion to help finance its share. Goldman Sachs projects cumulative 2025–2027 spending across the Big 4 at $1.15 trillion, more than double the $477 billion spent over the prior three years combined. BofA credit strategists found that this spending will consume 94% of operating cash flow minus dividends and buybacks.

At what revenue growth rate does any of this pay for itself? And what happens if inference costs fall 100-fold before the infrastructure is fully depreciated? I want to think about this the way a credit analyst would. Not as a technology story but as a corporate finance story. Because the numbers, assembled from earnings releases and analyst reports through February 2026, look less like a technology platform buildout and more like a leveraged buyout of the future.

The LBO

An LBO thesis goes like this: we borrow heavily today, acquire an asset, generate enough cash flow to service the debt, and eventually sell or refinance at a profit. The bet works if the returns from the acquired asset exceed the cost of capital. It fails if the asset underperforms, the cost of capital rises, or the timeline extends beyond what the capital structure can absorb.

The hyperscaler capex thesis has the same structure, substituting “equity” and “operating cash flow” for debt. Each company is telling shareholders: we will deploy enormous capital today, accept near-zero or negative free cash flow for 18 to 36 months, and recoup that investment through AI revenue growth. Sundar Pichai put the bull case plainly at Alphabet’s Q2 2024 earnings:

“The risk of underinvesting is dramatically greater than the risk of overinvesting for us here.”

Depreciated straight-line over five years, $175 billion in Alphabet capex produces $35 billion in annual depreciation. Add a conservative 10% cost of capital on the incremental investment, and the hurdle gets harder still. For the full $690 billion in 2026 hyperscaler capex, the annual depreciation burden alone approaches $115–140 billion at five- to six-year lives. That is before interest, power, operations, or the cost of next year’s upgrade cycle.
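The hurdle arithmetic can be sketched in a few lines of Python. This is a minimal illustration, not anyone's actual accounting: it assumes straight-line depreciation with zero salvage value and a flat capital charge on gross capex, and the function names are hypothetical. The dollar inputs are the figures quoted above.

```python
def straight_line_depreciation(capex_bn: float, useful_life_years: float) -> float:
    """Annual straight-line depreciation in $bn, assuming zero salvage value."""
    return capex_bn / useful_life_years


def annual_hurdle(capex_bn: float, useful_life_years: float, cost_of_capital: float) -> float:
    """Depreciation plus a flat capital charge on the gross amount invested.

    Assumption: the charge is applied to gross capex rather than to the
    declining net book value, so it overstates the burden in later years.
    """
    depreciation = straight_line_depreciation(capex_bn, useful_life_years)
    return depreciation + capex_bn * cost_of_capital


alphabet_capex_bn = 175.0     # Alphabet capex figure from the text
hyperscaler_capex_bn = 690.0  # upper end of the 2026 hyperscaler range

print(f"Alphabet depreciation, 5y life: ${straight_line_depreciation(alphabet_capex_bn, 5):.1f}bn")
print(f"Alphabet hurdle at 10% capital: ${annual_hurdle(alphabet_capex_bn, 5, 0.10):.1f}bn")
for life in (5, 6):
    dep = straight_line_depreciation(hyperscaler_capex_bn, life)
    print(f"Hyperscaler depreciation, {life}y life: ${dep:.1f}bn")
```

On these assumptions, $690 billion over five years comes to $138 billion a year, while the $115 billion low end of the range corresponds to a six-year life.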

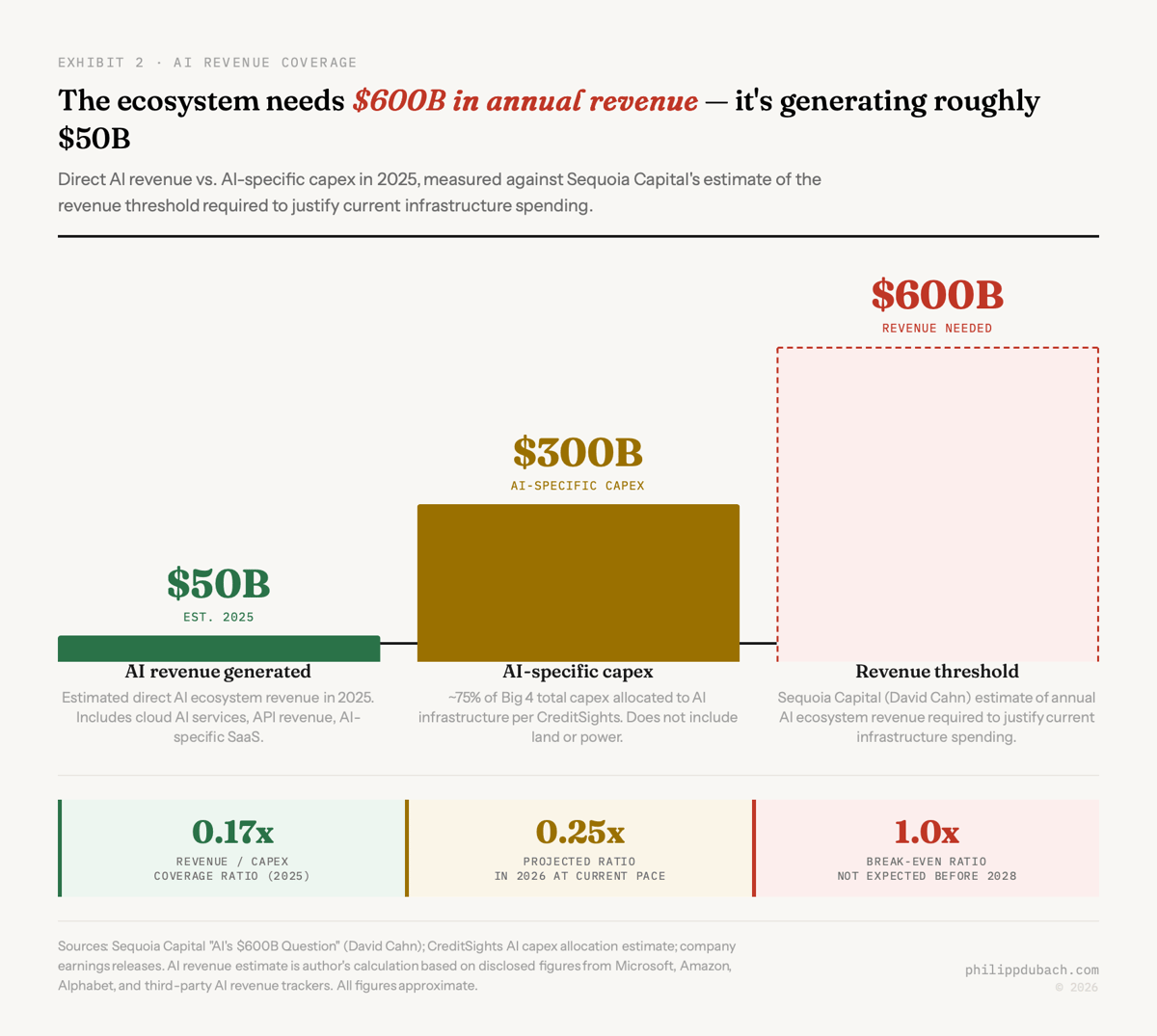

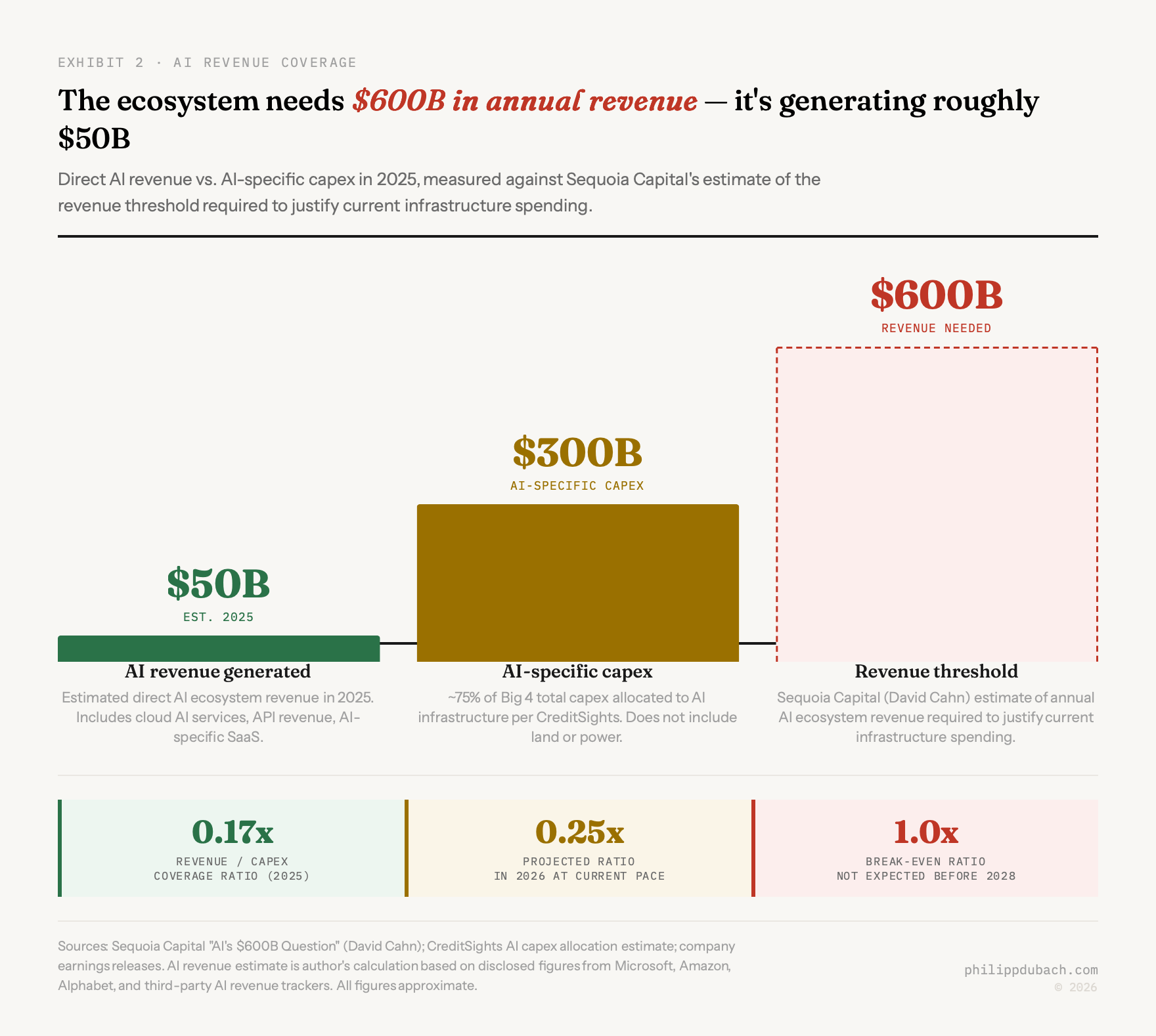

The revenue side of this ledger is far smaller than the capex side. Rough estimates place direct AI revenue across the ecosystem at $40–60 billion in 2025, against AI-specific capex of roughly $300 billion. Coverage ratio: approximately 0.15x. Sequoia’s David Cahn calculated that the AI ecosystem needs to generate $600 billion in annual revenue to justify current infrastructure spending, against perhaps $50–100 billion it is actually generating. By 2026, with AI revenue perhaps reaching $80–120 billion and AI capex at $450 billion, the ratio improves to roughly 0.25x. Still not a business.
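The coverage ratios are simple enough to check by hand, but a short sketch makes the ranges explicit. It takes the revenue and capex estimates quoted above at face value; the function name is hypothetical.

```python
def coverage_range(revenue_low_bn: float, revenue_high_bn: float, capex_bn: float) -> tuple[float, float]:
    """Return (low, high) revenue-to-capex coverage ratios."""
    return revenue_low_bn / capex_bn, revenue_high_bn / capex_bn


# Rough estimates quoted in the text, in $bn.
scenarios = {
    "2025": (40.0, 60.0, 300.0),
    "2026": (80.0, 120.0, 450.0),
}

for year, (rev_low, rev_high, capex) in scenarios.items():
    low, high = coverage_range(rev_low, rev_high, capex)
    print(f"{year}: {low:.2f}x to {high:.2f}x")
```

The 2025 range brackets the 0.15x figure. For 2026, 0.25x sits near the top of the range, so it reads as the optimistic end of these estimates rather than the midpoint.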

What would have to be true

The spending is not obviously irrational. The bull case is worth taking seriously: the right moment to build infrastructure for a platform shift is before the platform fully exists. Railroads were overbuilt. Fiber was overbuilt. Both excesses funded genuinely useful infrastructure that later ran at capacity. If AI becomes the general-purpose technology that most proponents claim, the AI infrastructure being deployed today could look like the most prescient investment since Standard Oil.

But that argument requires you to believe some very specific things about revenue growth that have not yet materialized. The 2025–2030 revenue ramp embedded in current capex implies AI revenue growing from roughly $60 billion today to somewhere between $600 billion and $2 trillion by 2030, depending on which bullish scenario you pick. Bain calculates that even under the most aggressive adoption scenario, AI generates $1.2 trillion in revenue, against the $2 trillion the spending requires to break even.

MIT’s Daron Acemoglu, who won the 2024 Nobel Prize in Economics, projects AI will deliver a total GDP increase of just 1.1–1.6% over ten years: roughly a 0.05% annual productivity gain. Only about 5% of economic tasks, he estimates, are cost-effectively automatable at current prices. Goldman Sachs’ Jim Covello made a similar argument in a June 2024 note: “Replacing low-wage jobs with tremendously costly technology is basically the polar opposite of the prior technology transitions I’ve witnessed in my thirty years of closely following the tech industry.” Neither of these is a fringe view. If either is roughly right, the revenue scenarios baked into current capex budgets do not close. And yet the same market is destroying software stocks because AI adoption is supposedly too strong. Both readings cannot be true.

Dario Amodei, who is himself building the infrastructure, put it very bluntly on the Dwarkesh Podcast in February 2026: “If my revenue is not $1 trillion, if it’s even $800 billion, there’s no force on Earth, there’s no hedge on Earth that could stop me from going bankrupt if I buy that much compute.” He was describing his own spending discipline relative to peers. The companies spending three times as much as Anthropic apparently believe they have found the hedge he could not.

Depreciation time bomb

One risk most analysis underweights: AI hardware becomes obsolete faster than the assets of any previous infrastructure cycle.

Hyperscalers have extended server useful lives from four years to five and six, saving billions in annual depreciation. But Amazon reversed course. In Q4 2024 it took a $920 million charge to retire certain servers and networking equipment early. Then, effective January 1, 2025, it shortened useful lives for a subset of servers from six to five years, citing “the increased pace of technology development, particularly in the area of artificial intelligence,” a decision expected to reduce 2025 operating income by a further $700 million. Jensen Huang, not a man known for underselling his own products, said of H100 GPUs once Blackwell shipped: “You couldn’t give Hoppers away.” Nvidia now releases new architectures annually, where it previously released them every two years.

Michael Burry, who spent 2005 correctly modeling the mortgage market’s hidden risks, estimates that hyperscalers will understate depreciation by roughly $176 billion in aggregate between 2026 and 2028, causing them to overreport earnings by more than 20%. I have no idea whether Burry is right on the specific number. But the direction is correct. If the useful life of a Blackwell GPU is closer to three years than five because Rubin replaces it in 2027, the depreciation math gets far worse.
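To see why the useful-life assumption matters so much, here is a small sketch of how annual straight-line expense scales when a life is shortened. It assumes zero salvage value and ignores already-accumulated depreciation on existing assets; the function name is hypothetical.

```python
def expense_increase(old_life_years: float, new_life_years: float) -> float:
    """Fractional rise in annual straight-line depreciation when the
    assumed useful life drops from old_life_years to new_life_years."""
    return old_life_years / new_life_years - 1.0


# Life changes discussed in the text: six to five years (Amazon's change)
# and five to three years (the Blackwell-replaced-by-Rubin scenario).
for old, new in [(6, 5), (5, 3)]:
    print(f"{old} -> {new} years: +{expense_increase(old, new):.0%} annual depreciation")
```

Going from six years to five raises the annual charge on new equipment by a fifth. Going from five to three raises it by about two-thirds.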

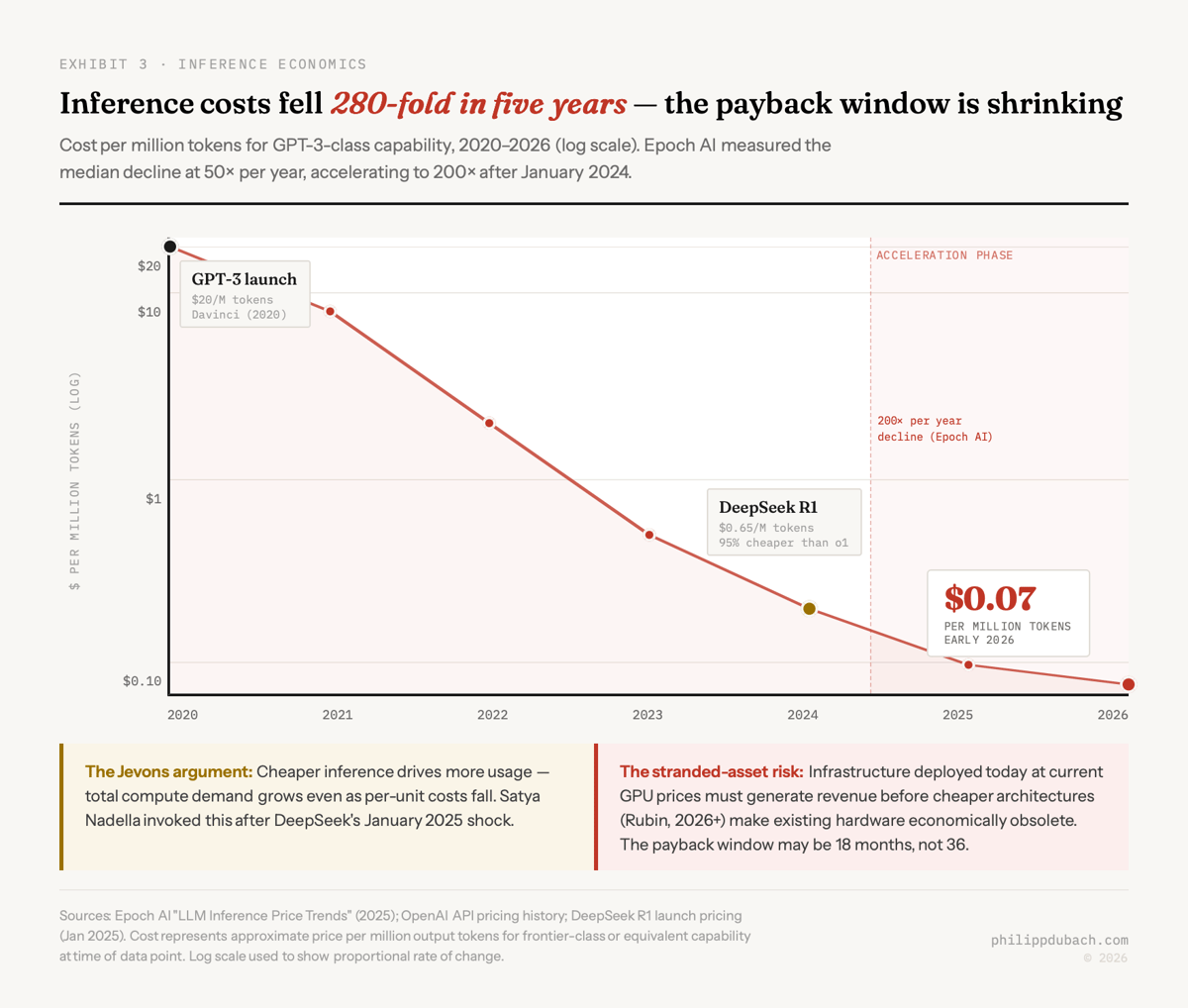

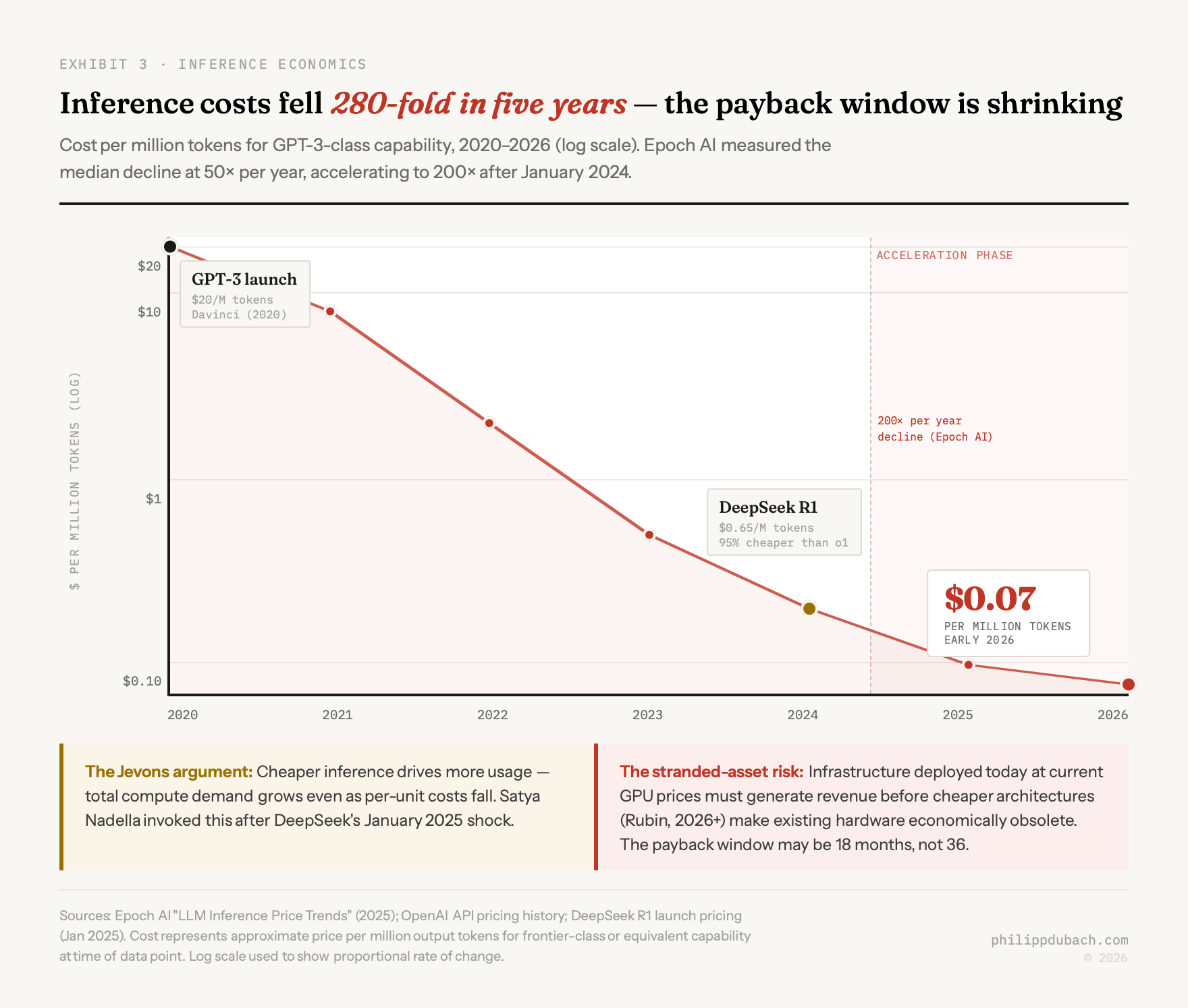

Epoch AI measured inference costs falling at a median rate of 50-fold per year, accelerating to 200-fold per year after January 2024. GPT-3-era inference cost around $20 per million tokens at launch in 2020. By early 2026, models of comparable capability cost roughly $0.07 per million tokens. That is a roughly 280-fold decline over about five years, and there is no obvious reason for it to stop.
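It helps to convert the endpoint comparison into an annual rate. The sketch below assumes a constant geometric decline over exactly five years, which is my simplification; the function name is hypothetical.

```python
def annualized_decline_factor(start_price: float, end_price: float, years: float) -> float:
    """Constant per-year factor by which price falls, assuming a smooth
    geometric decline from start_price to end_price over the period."""
    return (start_price / end_price) ** (1.0 / years)


start_price = 20.0  # $ per million tokens, 2020 (from the text)
end_price = 0.07    # $ per million tokens, early 2026 (from the text)

total_decline = start_price / end_price
per_year = annualized_decline_factor(start_price, end_price, 5.0)

print(f"Total decline: {total_decline:.0f}x")    # about 286x
print(f"Annualized:    {per_year:.1f}x per year")  # about 3.1x
```

The endpoint comparison implies costs falling by roughly 3x per year, well below the Epoch rates quoted alongside it, so the two figures are presumably measuring different things. Either way, the direction for anyone holding five-year assets is the same.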

The hyperscaler response to this is Jevons, an argument I explored in January: cheaper inference will explode demand, and the total compute consumed will far exceed what efficiency gains removed. They may be right. But the timing matters. Infrastructure being deployed today, at today’s GPU prices, needs to generate enough revenue before the next architecture cycle renders it economically obsolete. The payback window is not 36 months. It may be 18.

Arms race logic

Mark Zuckerberg said in September 2025 that an AI bubble was “definitely” a possibility, then spent $72 billion anyway. This is not irrationality. It is game theory. If AI really does create winner-take-most outcomes, slowing down is a bet that the platform shift is smaller than your competitors believe. Most boards are not willing to make that bet. So everyone keeps spending, and as I wrote last week, every bulge bracket bank agrees they should.

But the same logic drove WorldCom’s Bernie Ebbers. The same logic drove Global Crossing. The specific claim driving the 1990s telecom bubble was that internet traffic was “doubling every 100 days.” It was false: researcher Andrew Odlyzko traced it to misleading WorldCom/UUNET claims, and actual traffic doubled roughly once per year. By 2001, only 5% of installed fiber capacity was in use. The infrastructure eventually ran at capacity; it just took a decade and several dozen bankruptcies to get there.
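The gap between the claimed and the actual doubling time is easy to underestimate. A quick sketch, assuming smooth exponential growth (the function name is hypothetical):

```python
def annual_growth_multiple(doubling_time_days: float) -> float:
    """Growth multiple over one 365-day year for a given doubling time."""
    return 2.0 ** (365.0 / doubling_time_days)


claimed = annual_growth_multiple(100.0)  # "doubling every 100 days"
actual = annual_growth_multiple(365.0)   # doubling roughly once per year

print(f"Claimed: {claimed:.1f}x per year")  # about 12.6x
print(f"Actual:  {actual:.1f}x per year")   # 2.0x
print(f"Five-year gap: {(claimed / actual) ** 5:,.0f}x")
```

Capacity planned against about 12.6x annual growth, when demand was only doubling, is overbuilt by orders of magnitude within a few years, which is consistent with how little installed fiber was in use by 2001.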

Howard Marks published a December 2025 memo asking, with characteristic deliberateness, “Is It a Bubble?” He noted hyperscalers’ capex was outpacing revenue momentum and lenders were sweetening terms to keep deal flow alive. J.P. Morgan projects $300 billion in investment-grade bonds for AI data centers in 2026 alone. That is the same fragility that destroyed the telecom builders: cheap debt financing infrastructure before anyone has proved the revenue exists to service it.

Without AI spending, Pantheon Macroeconomics calculated in February 2026, U.S. corporate capex growth would currently be negative. The entire infrastructure investment story depends on this cycle continuing: total U.S. GDP grew just 1.4% annualized in H1 2025, and AI-related investment accounted for essentially all of it.