I bet there is another new architecture to find that is gonna be as big of a gain as transformers were over LSTMs.

Sam Altman, the CEO of the company most invested in the transformer, is telling a room of students it isn’t the final form. So what comes after the transformer? He’s probably right that something will, and the evidence is no longer anecdotal. Several recent papers have proved that the transformer’s worst properties are structural: not engineering problems to be fixed with better data or more compute, but mathematical lower bounds.

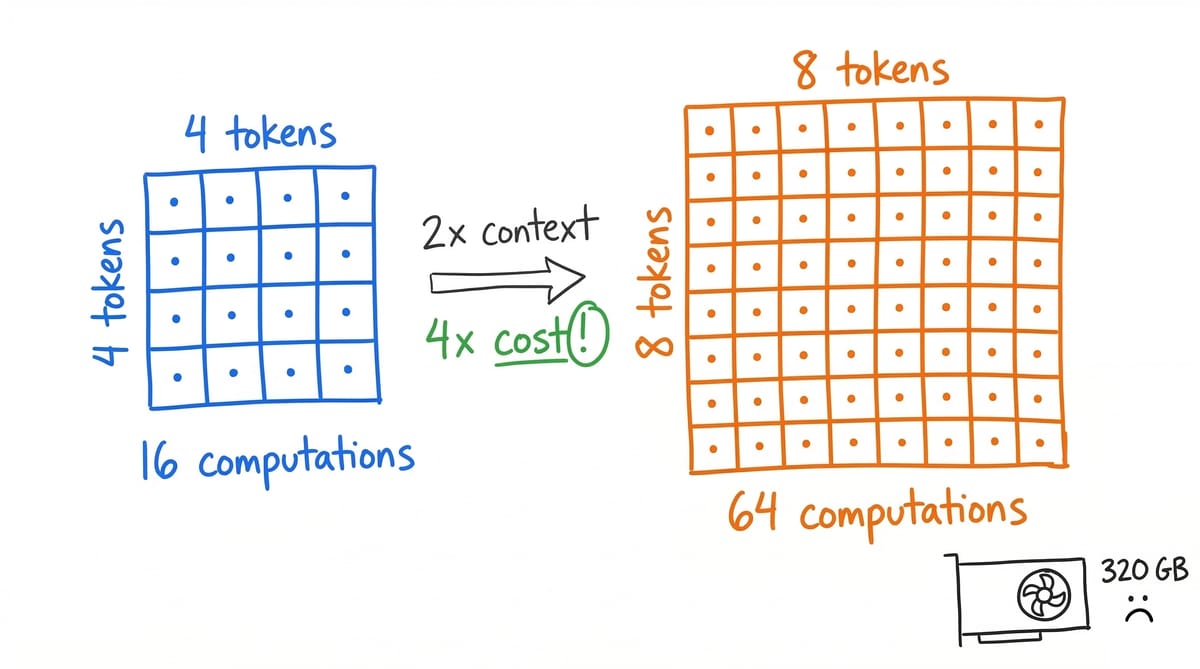

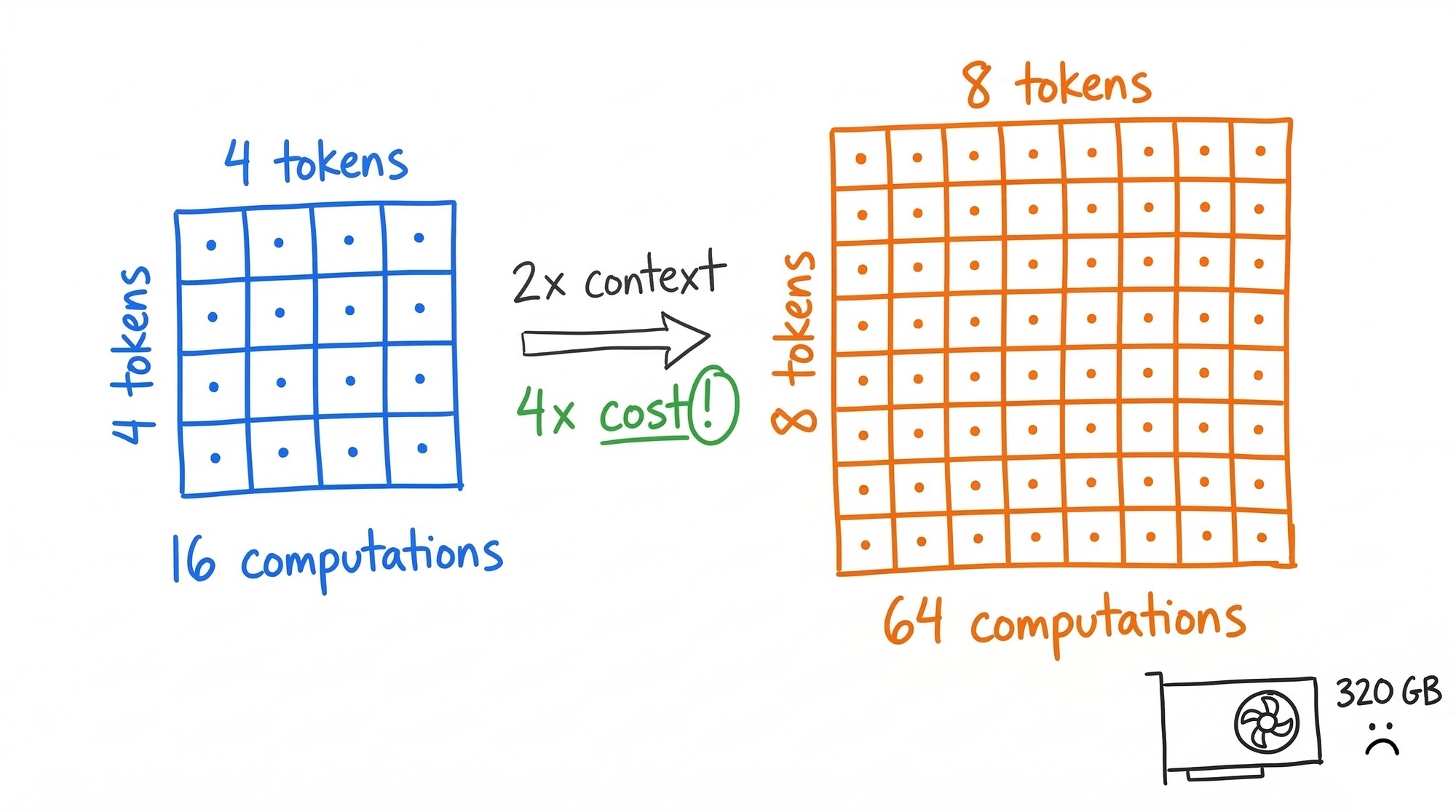

The transformer, born from the 2017 paper “Attention Is All You Need,” took us from barely-coherent GPT-2 to GPT-4 in five years. An extraordinary run. But Duman Keles et al. proved that O(n²) attention complexity isn’t an implementation detail. It’s a necessary lower bound unless the Strong Exponential Time Hypothesis (SETH), a foundational conjecture in complexity theory, turns out to be false. Double the context, quadruple the cost. The KV cache for a 70B model at one-million-token context eats roughly 320 GB of GPU memory. That is four 80 GB GPUs’ worth of memory for the cache alone, before a single weight is loaded.
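The arithmetic behind both numbers is short enough to check by hand. The sketch below is a back-of-the-envelope estimate, not a measurement: the layer count, number of KV heads, head dimension, and 16-bit precision are assumed values for a typical 70B-class model with grouped-query attention, and none of them come from the papers cited above.

```python
def kv_cache_bytes(n_tokens, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Bytes needed to cache keys and values for one sequence.

    The leading factor of 2 counts one key vector and one value vector
    per layer, per KV head, per token.
    """
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * n_tokens


def attention_score_entries(n_tokens):
    """Entries in the full n-by-n attention score matrix for one head."""
    return n_tokens * n_tokens


# Assumed config (hypothetical, typical of a 70B-class model):
# 80 layers, 8 KV heads, head_dim 128, 2 bytes per value.
cache = kv_cache_bytes(1_000_000, n_layers=80, n_kv_heads=8, head_dim=128)
print(f"KV cache at 1M tokens: {cache / 1e9:.0f} GB")

# Doubling the context quadruples the score matrix.
ratio = attention_score_entries(200_000) / attention_score_entries(100_000)
print(f"Cost ratio for 2x context: {ratio:.0f}x")
```

Note that the cache itself grows linearly with context; it is the score computation that grows quadratically. Both bite at long context, for different reasons.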

The problems run deeper than compute costs. Kalai and Vempala proved that any calibrated language model must hallucinate at a certain rate. A 2025 follow-up goes further: no computable LLM can be universally correct on unbounded queries. Not fixable with better training data. Not fixable with RLHF. A statistical property of how these models generate text.

On reasoning: Dziri et al. showed transformers collapse multi-step reasoning into pattern matching. Performance drops exponentially as task complexity rises. GPT-4 gets 59% on 3-digit multiplication. Chowdhury proved that the “lost in the middle” problem (models performing 20-30% worse on information buried mid-context) is a geometric property of the architecture itself. It is already present at initialization, before any training occurs.
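One simple way to see why an exponential drop is plausible: if every step in a chain has to be right, small per-step error rates compound. The toy model below assumes each step succeeds independently with the same probability, which is a simplification of my own and not the analysis in Dziri et al.; the 0.95 figure is purely illustrative.

```python
def chain_accuracy(per_step_accuracy: float, n_steps: int) -> float:
    """Probability that all n_steps are correct, assuming independent steps."""
    return per_step_accuracy ** n_steps


# Illustrative only: a model that is right 95% of the time per step.
for n in (1, 5, 10, 20):
    print(n, round(chain_accuracy(0.95, n), 3))
```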

These are theorems. The architecture that runs every frontier AI system has a ceiling, and the ceiling is proved.

The post-transformer stack is already in production

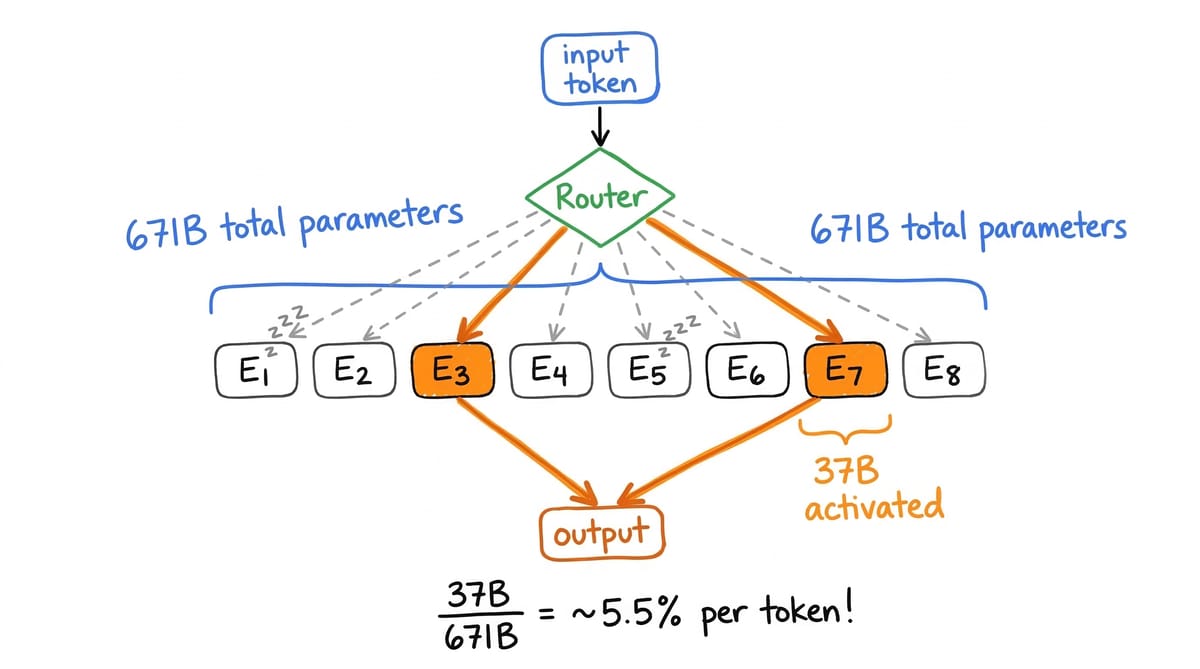

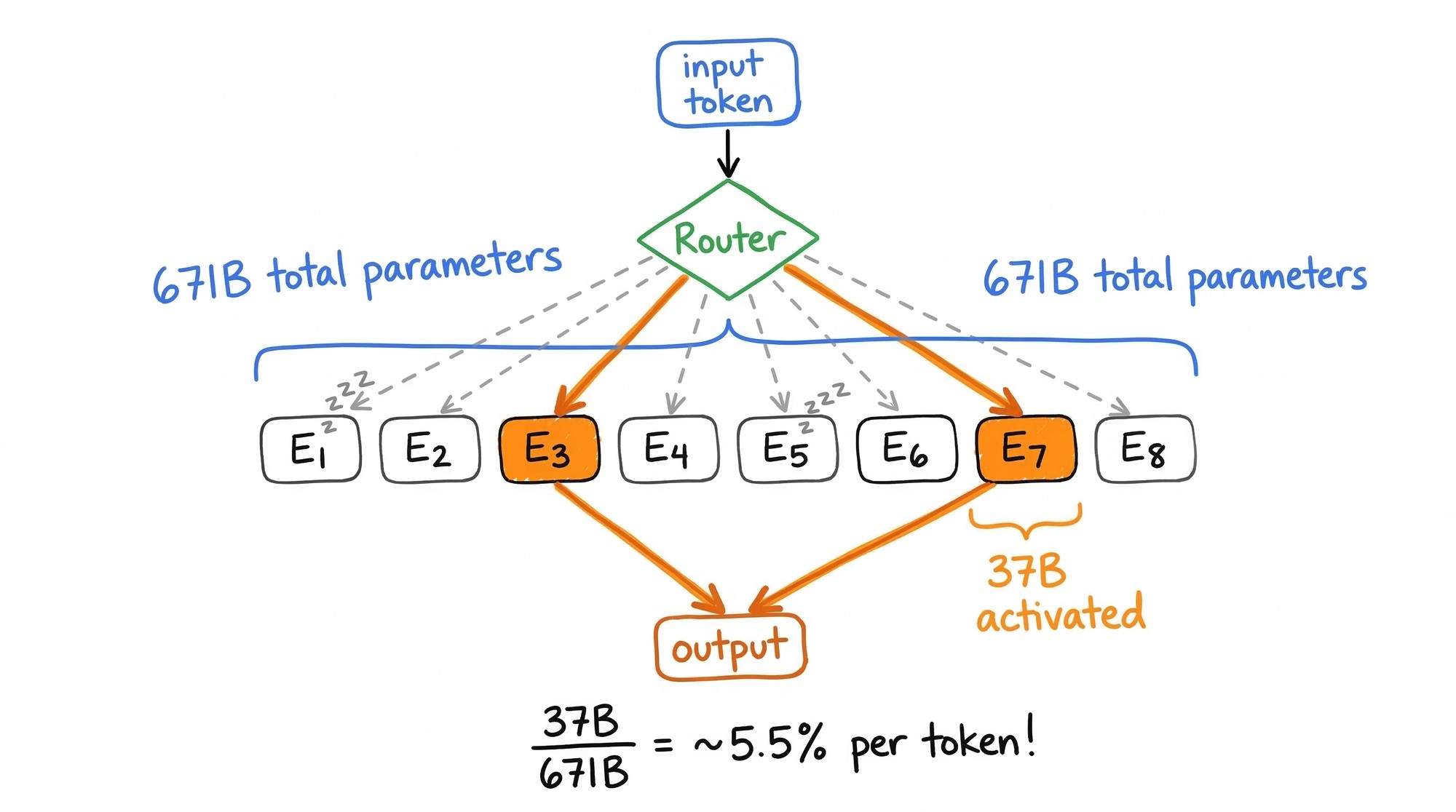

A survey by Fichtl et al. checked the top 10 models on every major benchmark. Zero were non-transformer. The transformer is still winning on the leaderboards. But the field is moving toward hybrid architectures. Over 60% of frontier models released in 2025 already use Mixture of Experts. DeepSeek-V3 has 671B total parameters but activates only 37B per token. It trained for 2.788 million H800 GPU hours, a fraction of what a comparable dense model would require, and matched frontier closed-source performance. By late 2025, DeepSeek-V3.2 reportedly hit GPT-5-level performance at 90% lower training cost. MoE doesn’t replace the transformer. It changes the economics so radically that it’s arguably the single biggest practical advance since the original architecture.
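The economics come from routing: each token only runs through a few experts, so compute tracks active parameters rather than total. Here is a minimal sketch of generic top-k routing in plain Python. It is not DeepSeek-V3's actual router, which uses its own gating and load-balancing scheme; the scalar "experts" and the logits are hypothetical stand-ins for feed-forward blocks and a learned router.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    order = sorted(range(len(router_logits)),
                   key=lambda i: router_logits[i], reverse=True)
    top = order[:k]
    # Renormalize over the selected experts only (one common convention).
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x, experts, router_logits, k):
    """Weighted sum of the outputs of the selected experts; the rest never run."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_logits, k))


# Hypothetical toy layer: 8 scalar experts, 2 active per token.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
logits = [0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.5, 0.3]
chosen = route_top_k(logits, k=2)
y = moe_forward(3.0, experts, logits, k=2)

# Active share of parameters per token, using the figures quoted above.
active_fraction = 37 / 671
print(f"Active per token: {active_fraction:.1%}")
```

With the quoted figures, each token touches about one-eighteenth of the model's parameters.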

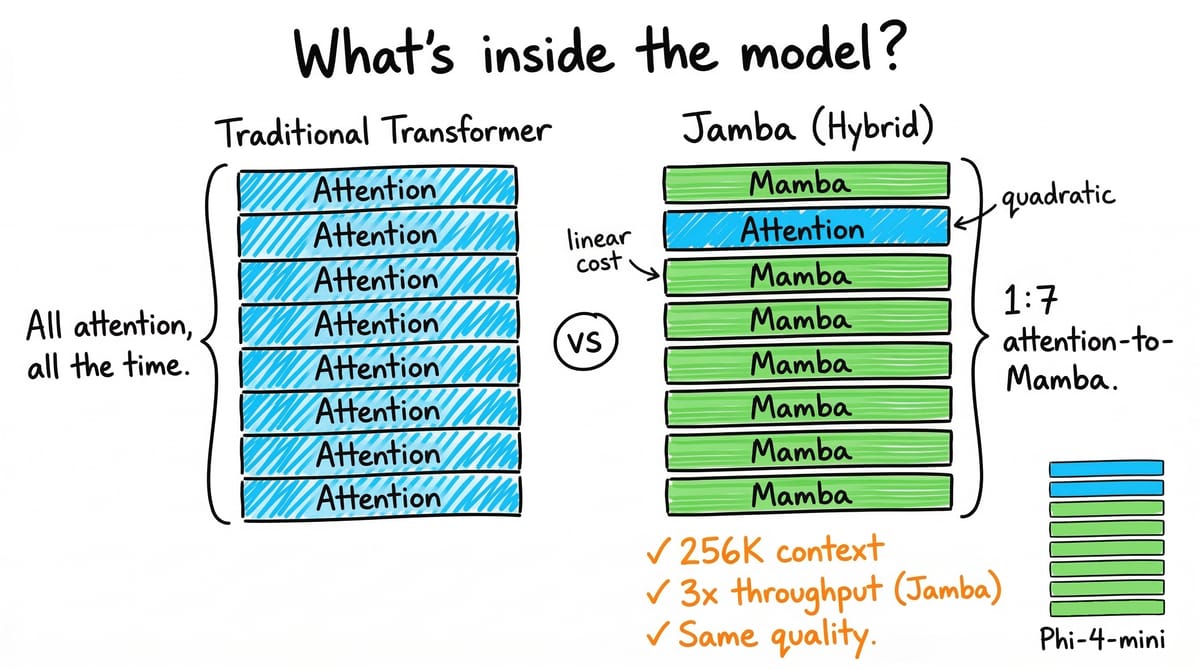

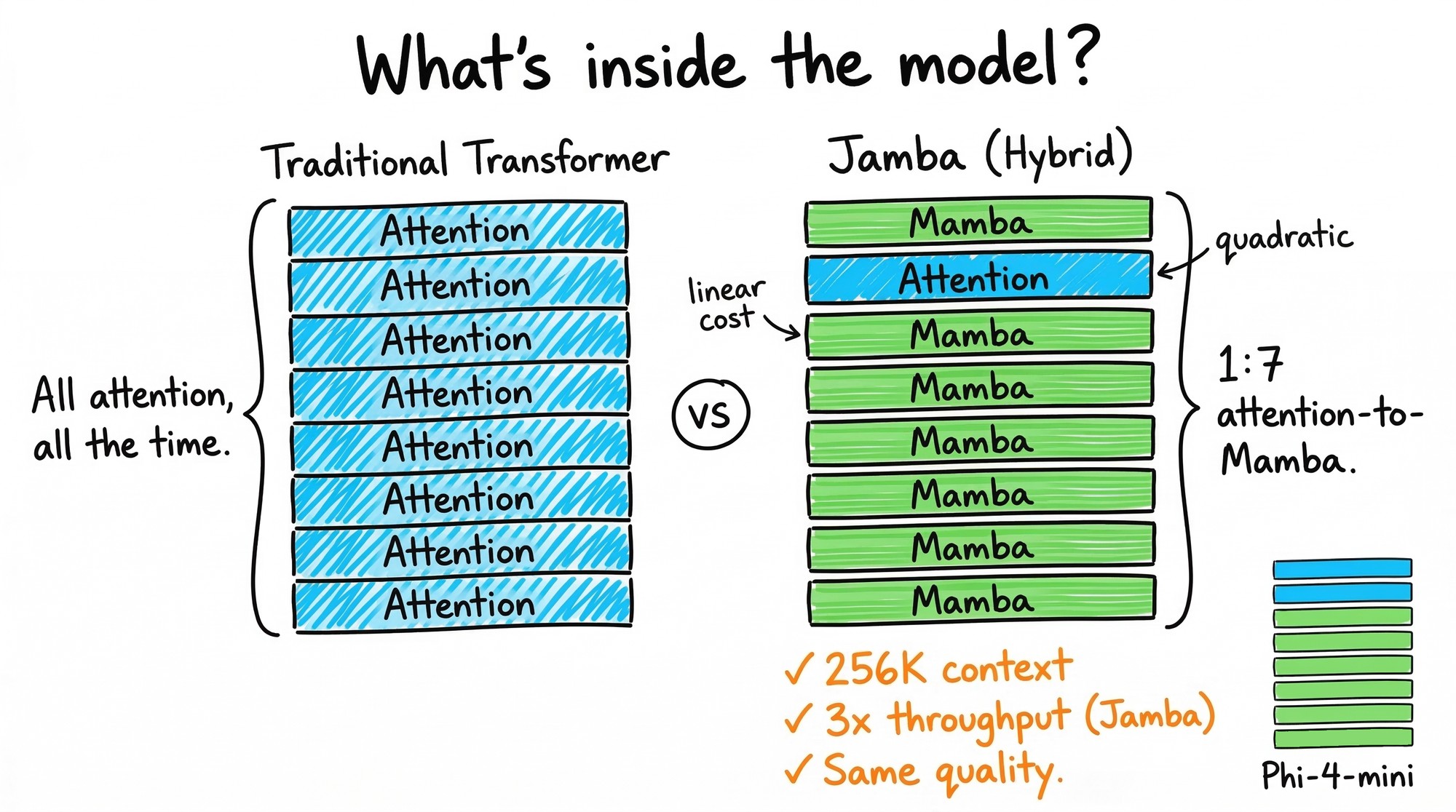

The more interesting part is what happens when you blend attention with state space models. Dao and Gu (2024) proved SSMs and attention are mathematically dual: two views of the same computation. That theoretical result is showing up in production. AI21’s Jamba runs a 1:7 attention-to-Mamba ratio and gets 256K context at 3x throughput over Mixtral. Alibaba’s Qwen3-Next shipped the first top-tier model with a hybrid backbone: Gated DeltaNet for linear attention at a 3:1 ratio with full attention. Microsoft’s Phi-4-mini-flash-reasoning is 75% Mamba layers with 10x throughput at 2-3x lower latency.
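The duality is easiest to see in a toy case. The sketch below takes a scalar-state, one-channel SSM (a heavy simplification I am assuming for illustration, far smaller than anything in Mamba) and computes the same output two ways: as a linear-time recurrence, and as a lower-triangular matrix applied to the whole sequence, which has the same shape as causally masked attention. The parameter values are arbitrary.

```python
def ssm_recurrent(a, b, c, x):
    """Linear-time view: h_t = a_t * h_{t-1} + b_t * x_t,  y_t = c_t * h_t."""
    h = 0.0
    ys = []
    for a_t, b_t, c_t, x_t in zip(a, b, c, x):
        h = a_t * h + b_t * x_t
        ys.append(c_t * h)
    return ys


def ssm_as_masked_matrix(a, b, c):
    """Quadratic view: M[t][s] = c_t * (a_{s+1} * ... * a_t) * b_s for s <= t."""
    n = len(a)
    M = [[0.0] * n for _ in range(n)]
    for t in range(n):
        decay = 1.0  # empty product when s == t
        for s in range(t, -1, -1):
            M[t][s] = c[t] * decay * b[s]
            decay *= a[s]
    return M


def matvec(M, x):
    return [sum(m * v for m, v in zip(row, x)) for row in M]


# Arbitrary illustrative parameters for a length-4 sequence.
a = [0.9, 0.5, 0.8, 0.7]
b = [1.0, 2.0, 0.5, 1.5]
c = [1.0, 0.5, 2.0, 1.0]
x = [1.0, -2.0, 3.0, 0.5]

y_rec = ssm_recurrent(a, b, c, x)
M = ssm_as_masked_matrix(a, b, c)
y_mat = matvec(M, x)
```

The recurrence costs O(n) and is what makes SSM inference cheap; the matrix form costs O(n²) but parallelizes like attention. Hybrids get to pick per layer.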

Diffusion language models are the wild card. LLaDA, the first 8B-parameter diffusion LLM, treats text generation as denoising rather than sequential token prediction. It matches Llama3-8B and does something autoregressive models struggle with: it addresses the “reversal curse,” outperforming GPT-4o on reversal tasks. Gemini Diffusion hit 1,479 tokens per second. Over 50 papers on diffusion LLMs appeared in 2025. If parallel generation works reliably at scale, inference economics change completely.

Alman and Yu proved there are tasks where every subquadratic alternative has a fundamental theoretical gap. That’s the strongest mathematical argument for why hybrids, not clean replacements, are what comes next.

The search is no longer human-speed

The part of this I find most interesting is the recursion. AI systems are now running the search for their own architectural successors.

AlphaEvolve, an evolutionary coding agent built on Gemini 2.0, found a way to multiply 4x4 complex matrices in 48 scalar multiplications: the first improvement on the 49 that Strassen’s 56-year-old algorithm needs. Across 50+ open math problems, it matched the best known solutions 75% of the time and beat them 20% of the time. The recursive part: AlphaEvolve found a 23% speedup on a kernel inside Gemini’s own architecture, cutting Gemini’s training time by 1%. Separately, a data center scheduling heuristic it discovered recovers 0.7% of Google’s total compute. Gemini making Gemini faster.
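For a sense of scale on the matrix result, here are the multiplication counts it sits between. This is a small counting sketch, not AlphaEvolve's algorithm: it only tallies scalar multiplications for the schoolbook method and for Strassen's scheme applied recursively to 2x2 blocks.

```python
def naive_mults(n: int) -> int:
    """Scalar multiplications for schoolbook n x n matrix multiplication."""
    return n ** 3


def strassen_mults(n: int) -> int:
    """Scalar multiplications for Strassen applied recursively (n a power of 2).

    Each level replaces 8 half-size block products with 7.
    """
    if n == 1:
        return 1
    return 7 * strassen_mults(n // 2)


ALPHAEVOLVE_4X4 = 48  # figure quoted in the text above

print("schoolbook 4x4:", naive_mults(4))
print("Strassen 4x4:  ", strassen_mults(4))
print("AlphaEvolve:   ", ALPHAEVOLVE_4X4)
```

One multiplication saved sounds small, but applied recursively to large matrices a lower base count compounds at every level.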

Karpathy’s AutoResearch, released March 7, 2026, is a 630-line Python script that lets an AI agent modify training code, run 5-minute experiments, check results, and iterate. He pointed it at his own highly-tuned “Time to GPT-2” codebase. The agent found about 20 additive improvements that transferred to larger models, cutting the metric by 11%. Shopify CEO Tobi Lutke tried it overnight: 37 experiments, 19% validation improvement, a 0.8B model outperforming a 1.6B one. Sakana AI’s AI Scientist v2 went further and produced the first AI-authored paper accepted through standard peer review. OpenAI said publicly in late 2025 that it’s researching how to safely build AI systems capable of recursive self-improvement. Two years ago this was a thought experiment.

What the hardware decides

The transformer won not because attention was theoretically prettier than recurrence. It won because it parallelized well on GPUs. Whatever comes next has to clear the same bar.

Pre-training scaling for dense transformers is flattening. OpenAI spent at least $500 million per major training run on Orion. The model hit GPT-4 performance after 20% of training; the remaining 80% gave diminishing returns. They downgraded it from GPT-5 to GPT-4.5. Sutskever at NeurIPS 2024: “Pre-training as we know it will end. The data is not growing because we have but one internet.” His startup SSI has raised money at a $32 billion valuation with about 20 employees and zero revenue. A bet that the next leap requires something architecturally new.

But test-time compute opened a different axis entirely. OpenAI’s o3 hit 87.5% on ARC-AGI, beating most humans. DeepSeek-R1 matched o1-level reasoning at 70% lower cost. OpenAI’s inference spending reached $2.3 billion in 2024: 15x what they spent training GPT-4.5. Dario Amodei at Morgan Stanley in March 2026: “We do not see hitting the wall. We don’t see a wall.” He’s talking about this axis, inference-time compute and RL from verifiable rewards, not about pre-training bigger dense models. The Densing Law now shows capability per parameter doubling every 3.5 months through better data, MoE, and distillation. Last year’s frontier, matched with a fraction of the parameters.
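A 3.5-month doubling time is easy to underestimate, so it helps to run the numbers. The sketch below simply extrapolates the trend at a constant rate, which is an assumption on my part and not something the Densing Law guarantees going forward; the 70B baseline is an arbitrary illustrative size.

```python
def params_for_same_capability(baseline_params: float, months: float,
                               doubling_months: float = 3.5) -> float:
    """Parameters needed to match a baseline model `months` later,
    if capability per parameter doubles every `doubling_months`."""
    return baseline_params / 2 ** (months / doubling_months)


# Illustrative: how small a model could match a 70B baseline over time,
# under constant-rate extrapolation.
for months in (3.5, 7, 12):
    needed = params_for_same_capability(70e9, months)
    print(f"after {months} months: {needed / 1e9:.1f}B parameters")
```

Under that extrapolation, a year is worth roughly a tenfold reduction in parameters for the same capability.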

Inference demand is projected to exceed training demand by 118x. Global data center power is heading toward 945 TWh by 2030, roughly Japan’s total electricity consumption. An architecture that scores 2x better on benchmarks but runs 3x worse at inference won’t win. What ships is whatever fits the hardware. The transformer isn’t going away. It’s becoming one component in a larger stack: attention for recall, SSMs for cheap sequence processing, MoE for capacity, maybe diffusion for parallel output. Jamba, Hymba, and Qwen3-Next already ship this way. That’s not a prediction. It’s what’s in production.

How fast the stack evolves is the open question. My answer, given AlphaEvolve and AutoResearch and AI Scientist v2, is faster than any previous architectural transition. I don’t know whether the transformer remains the dominant layer for two years or five. But I’m fairly confident that whatever comes next, humans won’t have designed it alone.