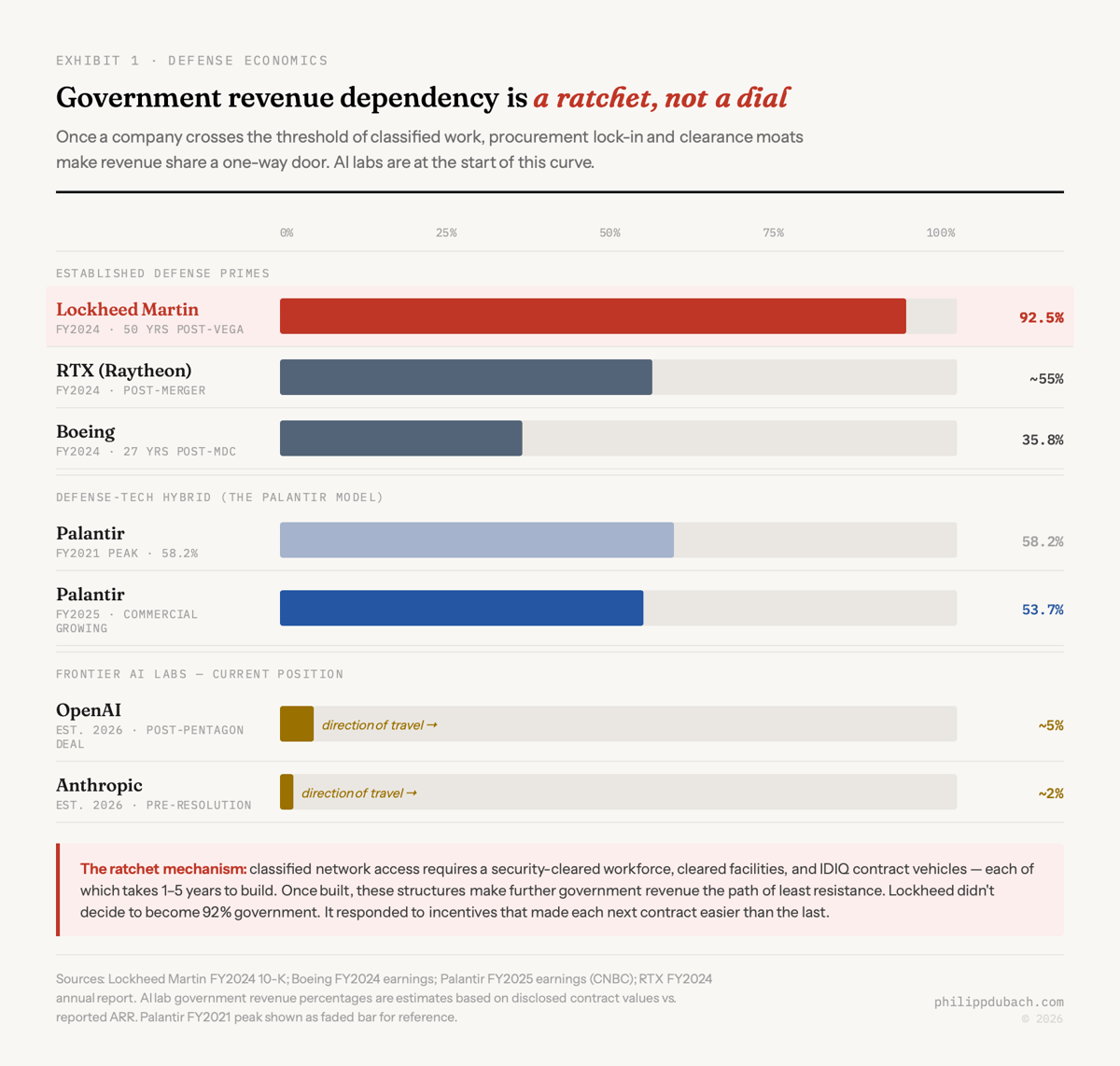

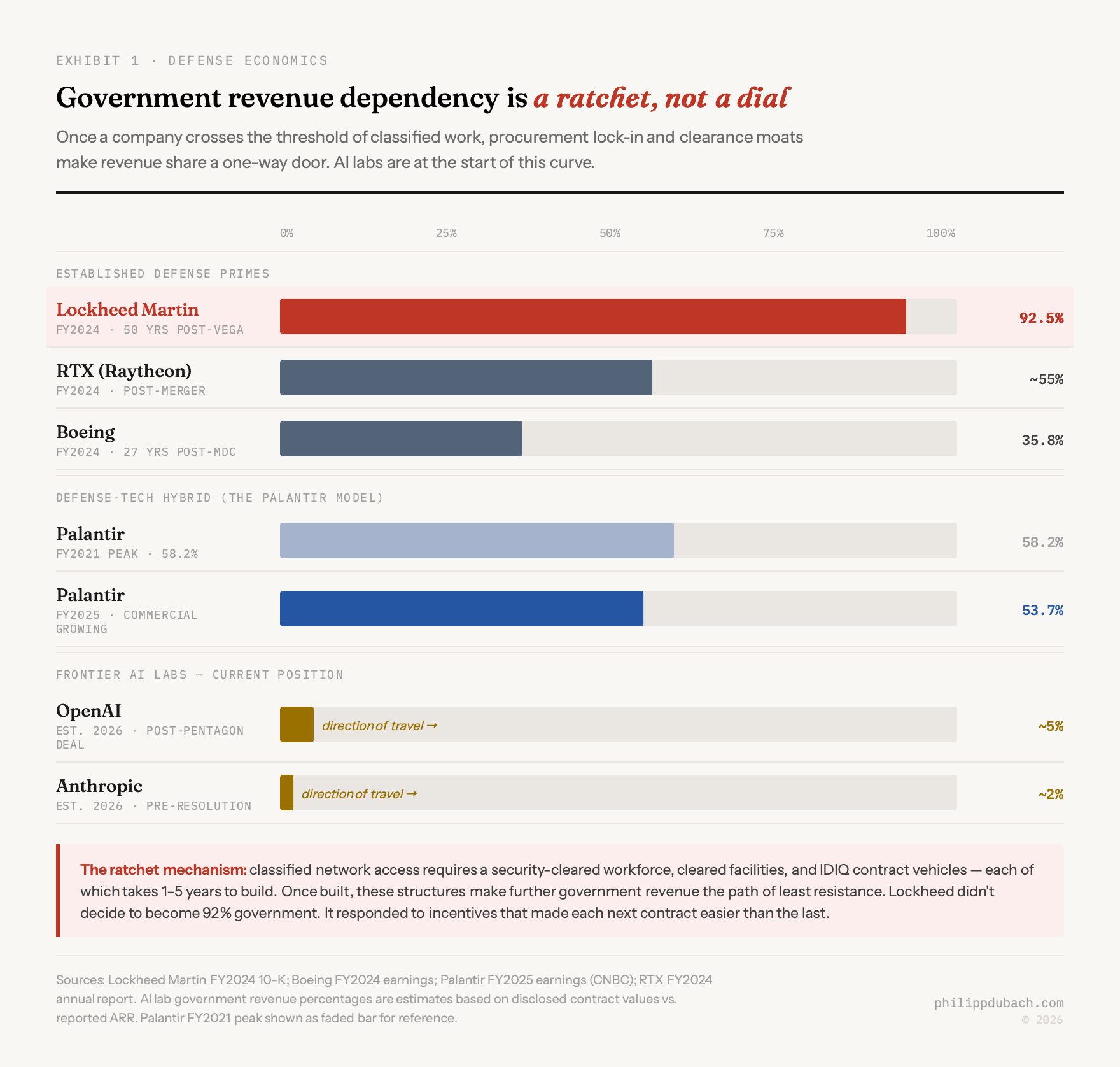

Lockheed started by building Amelia Earhart’s favorite plane. Then came a government loan guarantee in 1971 (the L-1011 TriStar nearly killed the company), a Cold War, decades of consolidation, and now a business that earns 92.5% of its revenue from government contracts, with the F-35 alone accounting for 26% of its $71 billion in annual sales. The process took about 50 years.

AI labs will do it faster.

On February 27, 2026, two things happened within hours of each other. President Trump ordered every federal agency to “IMMEDIATELY CEASE all use of Anthropic’s technology” after CEO Dario Amodei refused to strip safety constraints from Claude’s Pentagon deployment, specifically prohibitions on mass domestic surveillance and fully autonomous weapons. Defense Secretary Pete Hegseth then labeled Anthropic a “Supply-Chain Risk to National Security,” a designation previously reserved for foreign adversaries like Huawei, never before applied to an American company. That evening, Sam Altman announced that OpenAI had signed a deal to deploy its models on the Pentagon’s classified network, posting that the Department of War “displayed a deep respect for safety.” (Whether that reflects the Pentagon’s actual position or Altman’s political optimism remains unclear for now.)

Most coverage has framed this as an ethics dispute. I think that framing is going to age poorly. What I see is the economics of defense spending doing what they have always done to every company they touch, and the ethics arguments becoming less audible as the financial gravity increases.

The Last Supper precedent

In the summer of 1993, Secretary of Defense Les Aspin and Deputy Secretary William Perry invited the CEOs of America’s defense firms to dinner at the Pentagon and told them, in so many words, that most of them would not survive. Cold War budget cuts meant the government could sustain roughly one prime contractor per equipment category. Norman Augustine, then CEO of Martin Marietta, named it the Last Supper. The message was clear: consolidate or die, and the government would not stop you from consolidating.

The restructuring that followed was fast, even by M&A standards. Within four years, 51 prime defense contractors collapsed into five: Lockheed merged with Martin Marietta in 1995 ($10 billion), Boeing absorbed McDonnell Douglas in 1997 ($13.3 billion), and Raytheon folded in Hughes Electronics and Texas Instruments’ defense unit. Between 2011 and 2015, an additional 17,000 U.S. companies exited the defense industry, a contraction that hollowed out the supplier base the Big Five still depend on today.

The revenue dependency data shows what happens to the companies on the inside of that consolidation. Boeing before 1997 was, as Bank of America analyst Ron Epstein put it, “a company where engineers were high church.” Post-merger, Boeing relocated its headquarters from Seattle’s engineering center to Chicago, physically separating leadership from manufacturing. Defense rose to 35.8% of Boeing’s FY2024 revenue ($23.9 billion). The cultural shift that merger carried, financial discipline over engineering judgment, is what most 737 MAX post-mortems eventually trace back to. Companies don’t plan to end up here. They respond to incentives, and the incentives compound.

The AI industry will face the same incentives, just faster, and through a different mechanism: not M&A but access to classified networks and government-funded compute.

What defense money does to a company

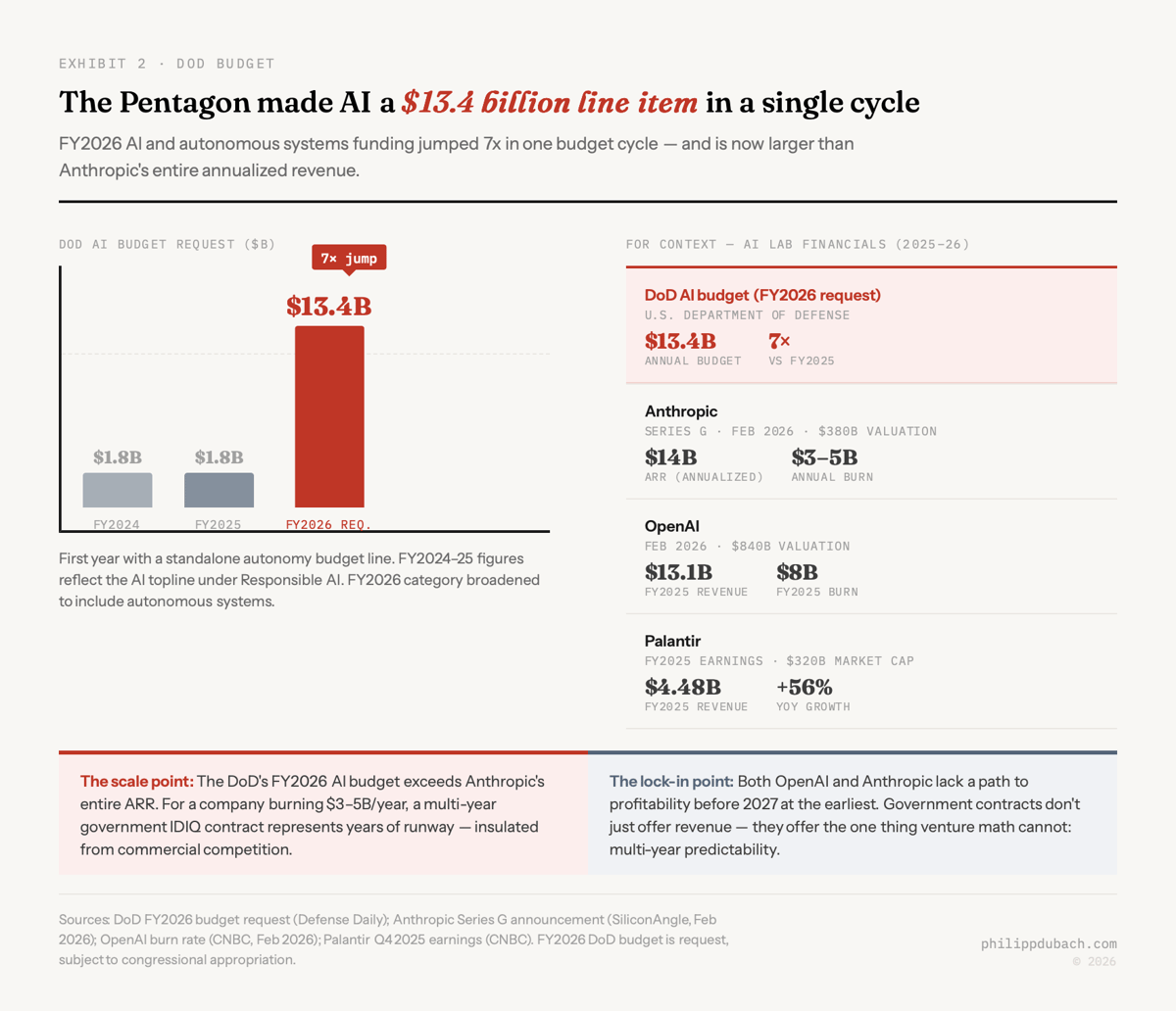

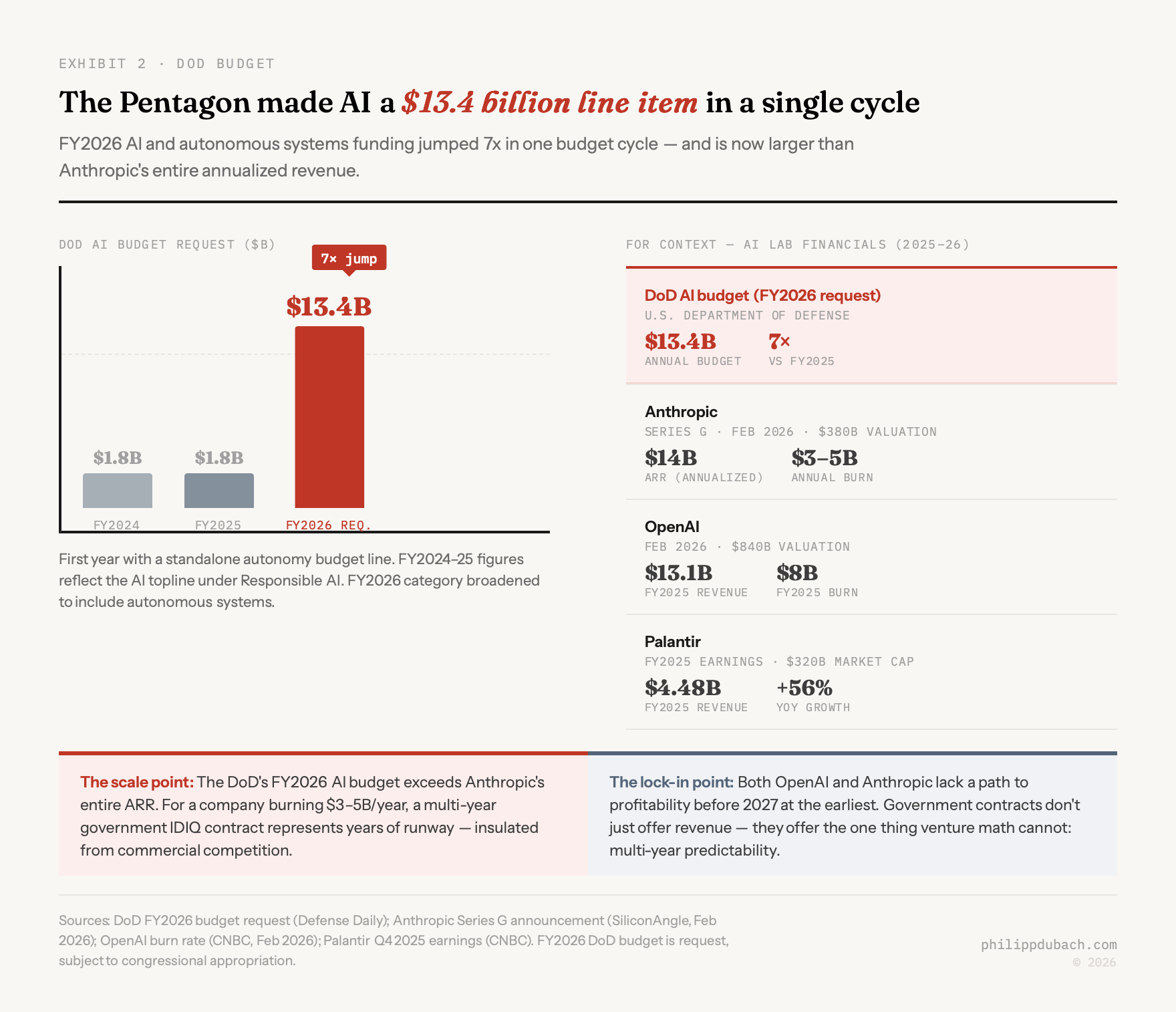

The FY2025 DoD AI budget was $1.8 billion, a figure that nearly everyone involved described as insufficient. The FY2026 budget request earmarks $13.4 billion for AI and autonomous systems, a roughly 7x increase in a single budget cycle, and the first time these technologies have their own standalone line item inside a total defense request of $892.6 billion. For context: Anthropic’s full annualized revenue as of February 2026 was approximately $14 billion. The Pentagon just made AI a budget category larger than most of the companies selling it.
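The ratios behind that comparison are easy to check. A minimal sketch, using only the dollar figures quoted above (in billions); the variable names are illustrative, and the figures themselves are taken as given rather than independently verified:

```python
# Figures quoted in the paragraph above, in billions of U.S. dollars.
FY2025_AI = 1.8            # FY2025 DoD AI budget
FY2026_AI = 13.4           # FY2026 request for AI and autonomous systems
FY2026_TOTAL = 892.6       # total FY2026 defense request
ANTHROPIC_RUN_RATE = 14.0  # Anthropic annualized revenue, February 2026

# Year-over-year multiple on the AI line.
growth_multiple = FY2026_AI / FY2025_AI

# The AI line as a share of the whole defense request.
share_of_request = FY2026_AI / FY2026_TOTAL

# The AI line relative to Anthropic's annualized revenue.
vs_anthropic = FY2026_AI / ANTHROPIC_RUN_RATE

print(f"AI line grew {growth_multiple:.1f}x")                  # 7.4x
print(f"Share of total request: {share_of_request:.1%}")       # 1.5%
print(f"Relative to Anthropic's revenue: {vs_anthropic:.0%}")  # 96%
```

Two things stand out: the jump is closer to 7.4x than 7x, and the new line item is still only about 1.5% of the total request, which leaves a lot of room for it to grow.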

Anthropic burns an estimated $3–5 billion annually; OpenAI burned approximately $8 billion in 2025. Neither has a clear path to profitability before 2027 at the earliest. Government contracts offer something consumer businesses cannot: predictable, multi-year, politically protected revenue streams that don’t churn when a competitor releases a better model.

The procurement structures deepen that dependency over time. IDIQ contracts (Indefinite Delivery, Indefinite Quantity), which now account for roughly 56% of DoD contract award dollars, run five years with extension options. Palantir’s Maven Smart System contract started at $480 million and expanded to nearly $1.3 billion through 2029. The JWCC cloud contract, which replaced the cancelled $10 billion JEDI contract, placed over $3.9 billion in task orders within three years of award to AWS, Google, Microsoft, and Oracle. Once embedded in classified systems, switching costs become close to prohibitive. A competitor cannot simply offer better inference speed.

Security clearances are maybe the most underappreciated asset. Processing a clearance takes an average of 243 days end-to-end, up to a year for TS/SCI with polygraph. Only around 4.2 million Americans hold active clearances, roughly 2.5% of the labor force, and an estimated 500,000 to 700,000 cleared positions currently sit unfilled. Average cleared professional compensation hit $119,131 in 2025; full-scope-polygraph holders averaged $141,299. For AI labs accustomed to hiring from MIT, Cambridge, and ETH Zürich, the cleared talent pool is thin and gets more expensive every year.

Any lab serious about classified work has to build a parallel organizational structure: separate hiring pipeline, separate facilities, separate operational security requirements. The lab that builds that structure first has a moat no competitor can cross quickly.

Palantir’s trajectory

The clearest view of where this ends is Palantir, which has been running the experiment at scale for a decade. It posted $4.48 billion in FY2025 revenue, up 56% year-over-year, with government comprising 53.7% of the total, down from a peak of 58.2% in 2021 as its commercial AIP platform gained traction. Its $10 billion U.S. Army Enterprise Agreement in July 2025 consolidated 75 existing software contracts into a single framework. Its market capitalization reached roughly $320 billion by late February 2026, making it worth nearly twice Boeing. The model, government as the client that funds and validates the technology, commercial as the client that justifies the valuation, is what the AI labs are now building toward.
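For a sense of scale, the split implied by those percentages can be worked out directly. This is a rough sketch from the figures quoted above (in billions); the derived numbers are back-of-envelope estimates, not reported line items, and the variable names are illustrative:

```python
# Figures quoted in the paragraph above, in billions of U.S. dollars.
REVENUE_FY2025 = 4.48  # Palantir FY2025 revenue
YOY_GROWTH = 0.56      # 56% year-over-year growth
GOV_SHARE = 0.537      # government share of total revenue

# Government and commercial revenue implied by the quoted share.
gov_revenue = REVENUE_FY2025 * GOV_SHARE
commercial_revenue = REVENUE_FY2025 - gov_revenue

# Prior-year revenue implied by the quoted growth rate.
implied_fy2024 = REVENUE_FY2025 / (1 + YOY_GROWTH)

print(f"Government: ${gov_revenue:.2f}B")             # $2.41B
print(f"Commercial: ${commercial_revenue:.2f}B")      # $2.07B
print(f"Implied FY2024 total: ${implied_fy2024:.2f}B")  # $2.87B
```

On these numbers, government revenue alone is roughly 84% of what the whole company is implied to have earned a year earlier.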

OpenAI at an $840 billion valuation with a classified Pentagon network deal is already further down that road than most coverage acknowledges. It has appointed retired General Paul Nakasone, former NSA director, to its board. It hired Dane Stuckey, who spent a decade at Palantir and served as its CISO for six of those years, as its own CISO. It has active job postings for Government Account Directors in Defense requiring Top Secret clearance and defense revenue targets exceeding $2 million per year.

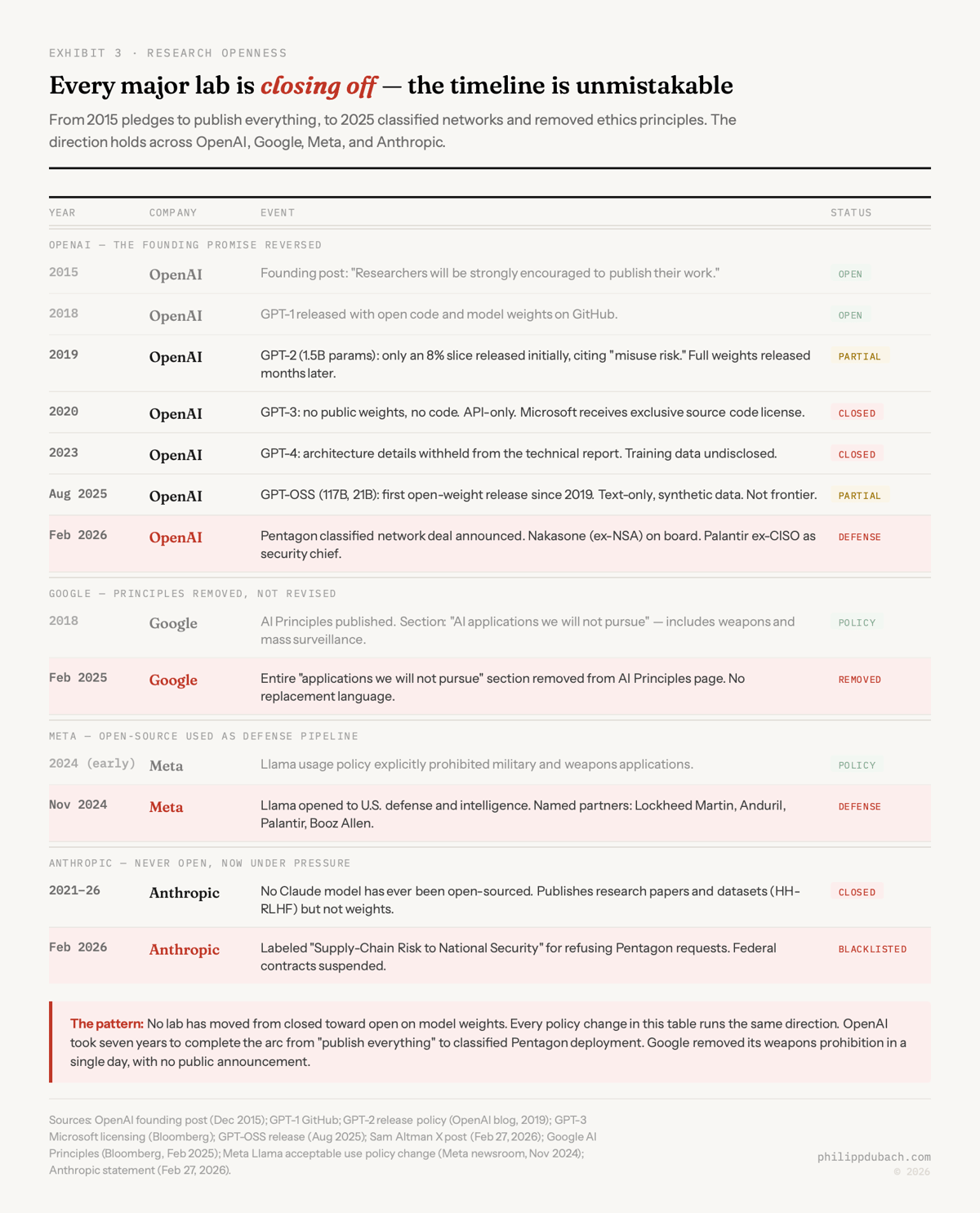

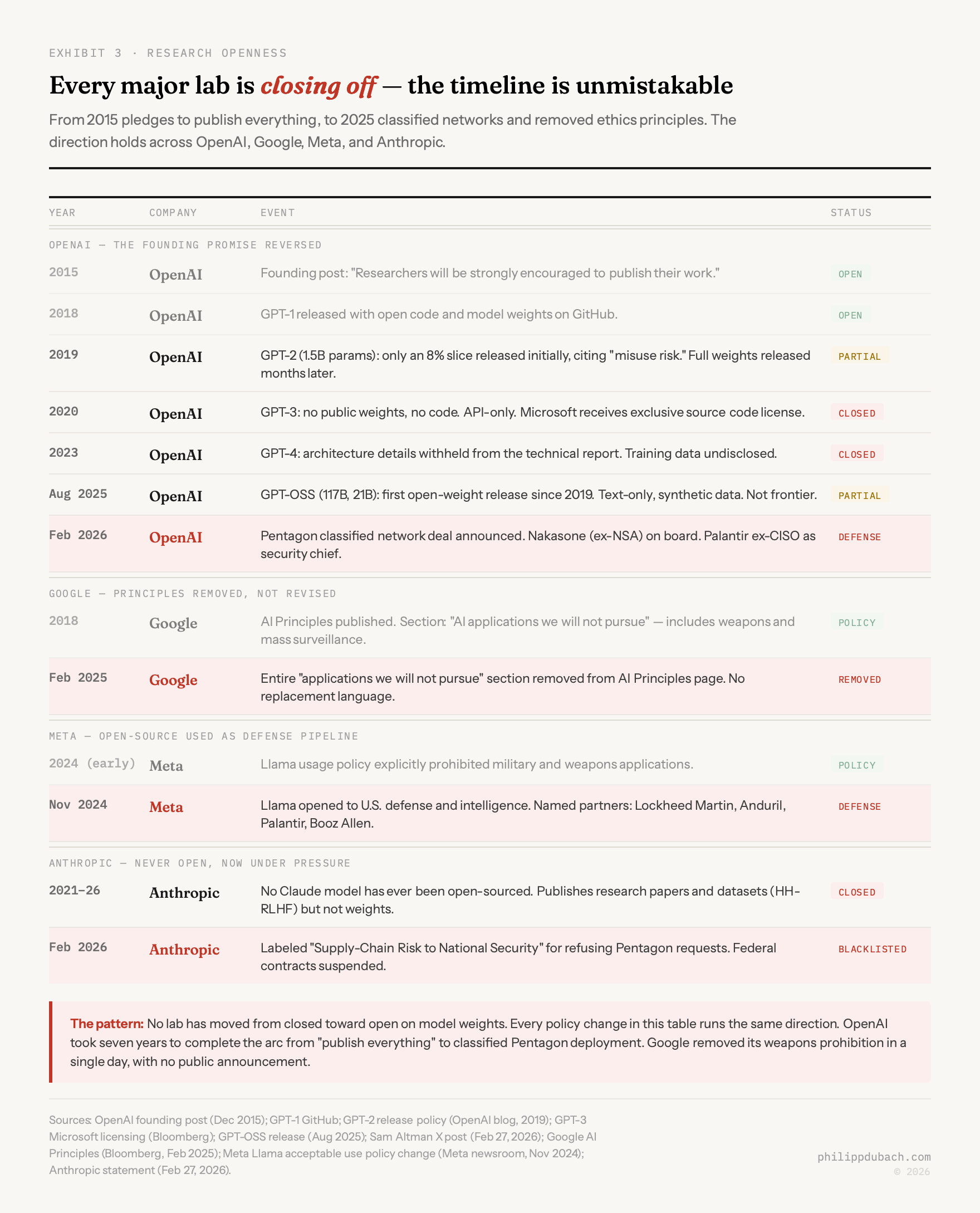

The publishing record is moving the same way. OpenAI’s 2015 founding post promised researchers “will be strongly encouraged to publish their work.” GPT-1 shipped with open-sourced code. GPT-2 was partially withheld in 2019, GPT-3 fully closed in 2020, GPT-4’s architecture undisclosed in 2023. OpenAI released smaller open-source models in August 2025 (its first since GPT-2, six years later) but they were text-only, trained on synthetic data, not frontier systems. Google removed the “AI applications we will not pursue” section from its principles in February 2025, including the explicit weapons prohibition. Meta opened Llama to defense agencies and contractors including Lockheed Martin and Anduril in November 2024. Anthropic has never open-sourced a Claude model. Every major lab is moving in the same direction.

The counterargument, and it’s a real one, is that defense R&D has historically generated civilian spillovers: ARPANET, GPS, jet engines, the semiconductor supply chain. Moretti, Steinwender, and Van Reenen, writing in the Review of Economics and Statistics (2025), found that a 10% increase in government-funded defense R&D generates a 5–6% increase in privately funded R&D in the same industry: crowding-in, not crowding-out. The estimated total effect: U.S. private R&D investment is $85 billion higher than it would be without government defense spending.

But there’s a difference between how much research gets done and what it gets pointed at. Lockheed’s R&D is now probably devoted almost entirely to classified hypersonics and directed-energy weapons. What it learns there does not flow back to commercial applications in any useful timeframe. The research volume expands; the scope narrows. Bell Labs devoted a substantial share of its personnel to government contracts at its Cold War peak; the 1956 AT&T Consent Decree forced royalty-free patent licensing on the transistor, which accidentally accelerated the civilian semiconductor industry by giving Texas Instruments and Fairchild Semiconductor access to the core technology. AI labs operating under classification will not be forced to open-license anything. That mechanism does not exist for software under ITAR.

I’m more confident in the direction of this analysis than in the timeline. The Anthropic supply-chain-risk designation may not survive legal challenge. The $13.4 billion FY2026 AI budget might not survive unchanged. Amodei might find a compromise that others in the industry treat as a ceiling rather than a floor. What I don’t think reverses is the structural pull. The defense budget is the largest single purchaser of advanced technology on earth, it’s growing, it operates on multi-year contract cycles that reward incumbents, and it is willing to use blunt regulatory tools against companies that don’t cooperate, as Anthropic learned in about six hours on February 27.

The Last Supper logic applies here too: the government will not block consolidation, and it will not save the companies that don’t participate. It will just find a different partner who will.